The convergence of cloud computing and generative AI has created unprecedented opportunities—and equally unprecedented risks. As organizations race to deploy AI-powered systems in 2026, ethical data pipelines have emerged as the critical infrastructure separating responsible innovation from reputational disaster. These pipelines don’t just move data; they embed governance, transparency, and accountability into every transformation step.

According to the Cisco 2026 Data and Privacy Benchmark Study, 94% of organizations believe customers won’t buy from companies they don’t trust with data—a stark reminder that ethical considerations directly impact business outcomes. Yet most data pipelines were built for efficiency, not ethics. They prioritize speed and scale while treating privacy controls and bias mitigation as afterthoughts bolted on during compliance reviews.

The stakes have escalated dramatically with GenAI’s rise. Large language models consume massive datasets without transparent provenance tracking. Training runs process personally identifiable information across global data centers. Explainability tools struggle to decode why AI systems make specific decisions. Meanwhile, regulatory frameworks worldwide are tightening requirements for algorithmic accountability.

Ethical data pipelines represent a fundamental shift: embedding moral considerations into technical architecture from the first line of code. This approach turns abstract principles like fairness and transparency into concrete data operations, such as validation rules, audit logs, and consent management, that execute automatically at scale. The question isn’t whether to build ethical pipelines, but how to architect them before the next headline-making AI incident.

Key Components of Ethical Data Pipelines

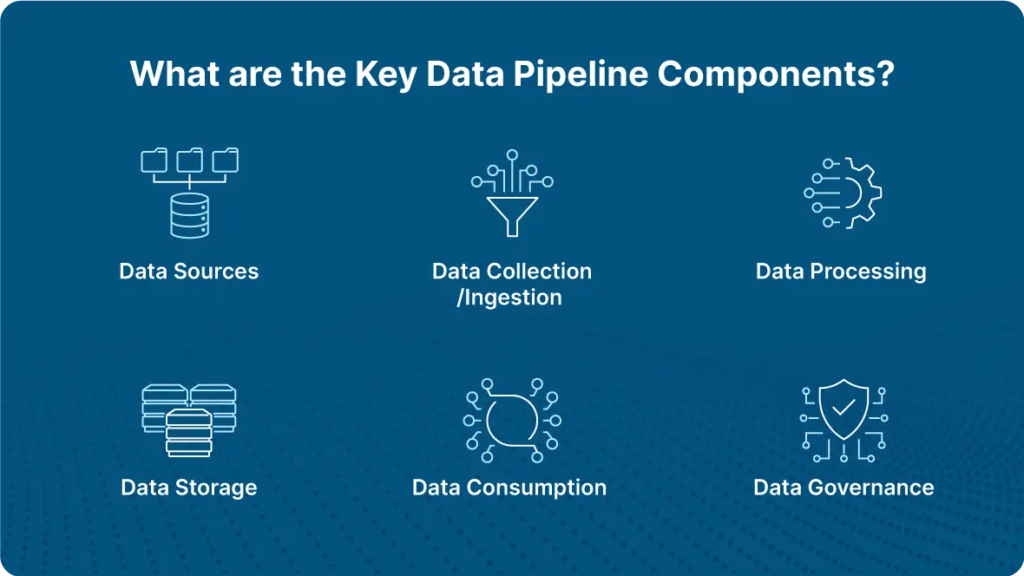

Building an ethical data pipeline requires more than good intentions: it demands a systematic approach to architecture, governance, and operational controls. At the foundation lies AI-assisted data governance, which combines traditional governance frameworks with machine learning to automate policy enforcement and detect compliance violations in real time. In practice, this means embedding privacy and fairness checks directly into data processing workflows rather than treating them as post-deployment audits.

The architecture itself centers on three interconnected pillars: transparency mechanisms, bias detection systems, and consent management infrastructure. Transparency requires comprehensive lineage tracking that documents every transformation a data point undergoes—from collection through model training to final output. A common pattern is implementing automated logging that captures not just what happened, but why algorithmic decisions were made, enabling human oversight at critical junctures.
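As a minimal sketch of what such lineage logging could look like, the Python below records each transformation together with a human-readable reason. The `LineageEvent` schema, its field names, and the `customers_v1` dataset are hypothetical; a production pipeline would persist events to durable storage or a dedicated lineage tool rather than keep them in memory.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class LineageEvent:
    """One transformation applied to a dataset (hypothetical schema)."""
    dataset_id: str
    step: str        # what happened
    reason: str      # why it happened, written for human reviewers
    actor: str       # job or person that ran the step
    input_rows: int
    output_rows: int
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class LineageLog:
    """In-memory, append-only lineage log (a real one would be persisted)."""

    def __init__(self) -> None:
        self._events: list[LineageEvent] = []

    def record(self, event: LineageEvent) -> None:
        self._events.append(event)

    def history(self, dataset_id: str) -> list[LineageEvent]:
        return [e for e in self._events if e.dataset_id == dataset_id]

    def to_json(self) -> str:
        return json.dumps([asdict(e) for e in self._events], indent=2)


# Usage: drop rows missing an email and record why.
log = LineageLog()
rows = [{"email": "a@example.com"}, {"email": None}]
cleaned = [r for r in rows if r["email"] is not None]
log.record(LineageEvent(
    dataset_id="customers_v1",
    step="drop_null_emails",
    reason="Consent lookup is keyed on email; rows without one cannot be checked",
    actor="ingest-job",
    input_rows=len(rows),
    output_rows=len(cleaned),
))
```

Capturing `reason` alongside row counts is what lets a reviewer later answer both "what changed" and "why", rather than reconstructing intent from code history.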

Synthetic data has emerged as a powerful tool for reducing privacy risks while maintaining analytical utility. By generating artificial datasets that preserve statistical properties of real data, organizations can train GenAI models with far less exposure of sensitive information. However, synthetic data generation itself requires careful oversight: a generator fitted to skewed or unrepresentative data can reproduce or amplify existing biases, or create misleading patterns.

Access controls represent another critical component, operating at both the data layer and the model layer. Organizations typically implement role-based permissions that restrict who can view raw data versus aggregated insights, coupled with audit trails that track every access event. The goal isn’t perfect security; it’s creating accountability structures that make misuse detectable and consequences enforceable.
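A minimal sketch of role-based access paired with an access log, assuming two hypothetical roles (`analyst`, `data_steward`) and two data tiers (`aggregated`, `raw`). Denied attempts are logged too: recording only successful reads would hide exactly the misuse the audit trail is meant to surface.

```python
from datetime import datetime, timezone

# Hypothetical role model: which data tiers each role may read.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "analyst": {"aggregated"},
    "data_steward": {"aggregated", "raw"},
}

# Every access attempt lands here, whether it was allowed or not.
access_log: list[dict] = []


def can_read(user: str, role: str, tier: str) -> bool:
    """Check the role's permissions and record the attempt in the audit log."""
    allowed = tier in ROLE_PERMISSIONS.get(role, set())
    access_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "tier": tier,
        "allowed": allowed,
    })
    return allowed


# Usage
can_read("maya", "analyst", "aggregated")   # allowed
can_read("maya", "analyst", "raw")          # denied, but still logged
```

Unknown roles fall back to an empty permission set, so a misconfigured role gets no access rather than default access.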

These components work together to create pipelines that don’t just process data efficiently, but do so in ways that respect individual rights and societal values—a foundation that becomes even more critical when deployed through cloud infrastructure.

The Role of Cloud Technologies in Data Pipelines

Cloud platforms have fundamentally transformed how organizations build and operate data pipelines, offering both unprecedented scalability and complex ethical challenges. The shift from on-premises infrastructure to cloud-native architectures has made data processing more accessible—but also introduced new vulnerabilities in data governance and privacy controls.

Modern cloud architectures enable distributed data processing at massive scale, but this distribution fragments governance oversight. Data often moves across multiple regions, jurisdictions, and service providers, each with different compliance requirements, and organizations commonly lose visibility into where sensitive information resides and who has access to it. CIO research highlights that as GenAI systems consume data from multiple cloud sources, ensuring ethical data handling becomes significantly more complex.

The advantage of cloud-based pipelines lies in their built-in security features and compliance frameworks. Leading cloud providers now offer native tools for data lineage tracking, automated access controls, and privacy-preserving computation. However, these tools are only as effective as their implementation, and organizations commonly adopt cloud infrastructure without adequate governance frameworks in place.

A practical approach is treating cloud infrastructure as a foundation for Responsible AI deployment rather than a complete solution. Organizations must layer their own ethical controls on top of cloud capabilities: defining clear data retention policies, implementing granular role-based access, and maintaining audit trails that span multiple cloud services. The cloud provides the infrastructure; ethical data practices require intentional architecture decisions that prioritize transparency and accountability at every stage of the pipeline.
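Retention policies are one of the controls organizations have to layer on themselves. A minimal sketch, assuming a hypothetical policy table that maps data categories to maximum ages; it only flags records for deletion review rather than deleting anything.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical retention policy: maximum age per data category.
RETENTION = {
    "clickstream": timedelta(days=90),
    "support_tickets": timedelta(days=365),
}


def flag_for_deletion(records: list[dict], now: datetime) -> list[str]:
    """Return IDs of records older than their category's retention window.

    Records in a category with no policy are flagged as well, so that
    unclassified data is surfaced for review instead of kept indefinitely.
    """
    flagged = []
    for rec in records:
        limit = RETENTION.get(rec["category"])
        if limit is None or now - rec["created_at"] > limit:
            flagged.append(rec["id"])
    return flagged


# Usage
now = datetime(2026, 1, 1, tzinfo=timezone.utc)
records = [
    {"id": "a", "category": "clickstream", "created_at": now - timedelta(days=120)},
    {"id": "b", "category": "clickstream", "created_at": now - timedelta(days=10)},
    {"id": "c", "category": "unlabeled", "created_at": now},
]
print(flag_for_deletion(records, now))  # ['a', 'c']
```

Passing `now` in explicitly keeps the check deterministic and testable, and lets the same policy run identically across services in different cloud regions.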

GenAI: Transforming Data Practices

Generative AI is fundamentally reshaping how organizations approach data collection, processing, and governance, making ethical data extraction both more powerful and more complex. Unlike traditional analytics, which processes existing data, GenAI actively generates insights, predictions, and content, creating new ethical considerations at every stage of the data pipeline. The technology’s transformative impact manifests in three critical areas: automated data quality assessment, intelligent privacy preservation, and real-time compliance monitoring.

A common pattern is organizations deploying GenAI models to identify sensitive information within unstructured datasets, scanning documents, emails, and communications to flag personally identifiable information before it enters the pipeline. This proactive approach can catch privacy risks that rule-based systems typically miss.
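A minimal sketch of a pre-ingestion scan. The regex patterns are a deliberately simple rule-based baseline, and `model_detector` is a hypothetical stand-in for a GenAI classifier (not a real API); the point is the structure: several detectors run over each document, and any hit keeps the record out of the pipeline.

```python
import re
from typing import Callable

# Rule-based baseline; real PII detection needs far broader coverage.
PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

Detector = Callable[[str], list[str]]


def regex_detector(text: str) -> list[str]:
    return [name for name, pattern in PATTERNS.items() if pattern.search(text)]


def model_detector(text: str) -> list[str]:
    # Hypothetical placeholder for a GenAI classifier call; not a real API.
    return ["person_name"] if "my name is" in text.lower() else []


def scan(text: str, detectors: list[Detector]) -> set[str]:
    """Union of PII types found by all detectors; non-empty means quarantine."""
    found: set[str] = set()
    for detect in detectors:
        found.update(detect(text))
    return found


# Usage
detectors = [regex_detector, model_detector]
print(scan("Reach me at jane@example.com", detectors))  # {'email'}
print(scan("My name is Jane", detectors))               # {'person_name'}
```

Taking the union of detectors means a model-based detector can only add findings on top of the rule-based baseline, never silently override it.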

However, this power introduces significant trust challenges. GenAI systems can inadvertently amplify existing biases or create new privacy vulnerabilities if not properly governed. One practical approach is implementing “dual-model” verification: using one GenAI system to generate data insights and another to audit those outputs for bias, accuracy, and compliance violations.
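The dual-model pattern reduces to a release gate. In this sketch, `toy_generator` and `toy_auditor` are hypothetical stand-ins for two independently configured models; only output that the auditor passes is released, and the issues it reports are kept for review.

```python
from typing import Callable

Generator = Callable[[str], str]
Auditor = Callable[[str], list[str]]  # returns issues; an empty list means pass


def generate_with_audit(prompt: str, generate: Generator, audit: Auditor) -> dict:
    """Run the generator, then release its output only if the auditor passes it."""
    output = generate(prompt)
    issues = audit(output)
    return {
        "released": not issues,
        "output": None if issues else output,
        "issues": issues,  # kept for human review either way
    }


# Hypothetical stand-ins for two independently configured models.
def toy_generator(prompt: str) -> str:
    return f"{prompt}: churn rose; see account jane@example.com"


def toy_auditor(text: str) -> list[str]:
    return ["contains an email address"] if "@" in text else []


result = generate_with_audit("Q3 summary", toy_generator, toy_auditor)
print(result["released"], result["issues"])  # False ['contains an email address']
```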

The most promising development is synthetic data generation: using GenAI to create realistic but entirely artificial datasets that preserve statistical patterns while greatly reducing the exposure of individual records. Typically, organizations train generative models on real data, then use those models to produce synthetic alternatives for testing, development, and external sharing.
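A deliberately simple sketch of the fit-then-sample workflow, using only Python’s `statistics.NormalDist`. It fits an independent normal distribution to each numeric column, so it preserves per-column means and spreads but not correlations between columns; production generators (copulas, GANs, or language models) capture far more structure. Synthetic output from any generator should still be checked for leakage of real records before it is shared.

```python
from statistics import NormalDist, mean, stdev


def fit_marginals(rows: list[dict[str, float]]) -> dict[str, NormalDist]:
    """Fit an independent normal distribution to each numeric column."""
    model = {}
    for column in rows[0]:
        values = [row[column] for row in rows]
        model[column] = NormalDist(mean(values), stdev(values))
    return model


def sample_synthetic(model: dict[str, NormalDist], n: int, seed: int = 0) -> list[dict[str, float]]:
    """Draw n artificial rows. Columns are sampled independently, so
    correlations present in the real data are NOT reproduced."""
    draws = {
        column: dist.samples(n, seed=seed + i)
        for i, (column, dist) in enumerate(model.items())
    }
    return [{column: draws[column][k] for column in model} for k in range(n)]


# Usage with a tiny illustrative "real" table.
real = [
    {"age": 30.0, "monthly_spend": 100.0},
    {"age": 40.0, "monthly_spend": 150.0},
    {"age": 50.0, "monthly_spend": 200.0},
]
model = fit_marginals(real)
synthetic = sample_synthetic(model, n=1000, seed=7)
```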

Looking ahead, the key to ethical data extraction with GenAI lies in treating these systems not as black boxes, but as transparent tools requiring continuous oversight. The technology demands new governance frameworks that can evolve as quickly as the models themselves.

Strategic Context & Business Drivers

| Factor | Insight | Business Impact |
| --- | --- | --- |
| Customer Trust | 94% of organizations say customers won’t buy from companies they don’t trust with data | Ethics directly affects revenue |
| Rise of GenAI | Massive datasets consumed without clear provenance | Increased accountability risk |
| Regulatory Pressure | Tightening global AI and privacy regulations | Mandatory compliance architecture |
| Cloud Adoption | Distributed data processing across regions | Governance complexity increases |
| Reputational Risk | AI failures create public backlash | Ethics becomes strategic priority |

Example Scenarios: Implementing Ethical Data Pipelines

Translating ethical principles into operational data pipelines requires concrete implementation patterns. A common scenario involves an e-commerce platform using GenAI to personalize product recommendations while maintaining customer privacy. In practice, the organization implements a federated learning approach where models train on decentralized customer data without centralizing sensitive information in a single repository. The pipeline encrypts data at rest and in transit, applies differential privacy techniques to aggregate insights, and maintains detailed audit logs of all data access patterns.
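The differential privacy step can be illustrated with the Laplace mechanism on a counting query. This sketch covers only that step, not federated training; the `epsilon` value in any real deployment is a policy decision, and each additional release of the same data spends more of the privacy budget.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    # The difference of two i.i.d. exponentials with mean `scale` is Laplace(0, scale).
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(flags: list[bool], epsilon: float, rng: random.Random) -> float:
    """Epsilon-differentially private count of True values.

    A count changes by at most 1 when one customer is added or removed
    (sensitivity 1), so Laplace noise with scale 1/epsilon suffices for
    a single release.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    return sum(flags) + laplace_noise(1.0 / epsilon, rng)


# Usage: how many customers clicked a recommendation, released with noise.
rng = random.Random(42)
clicked = [True] * 50 + [False] * 50
noisy = private_count(clicked, epsilon=1.0, rng=rng)
```

Smaller `epsilon` means larger noise and stronger privacy; the noise is unbiased, so aggregates stay useful on average.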

Another practical approach involves healthcare analytics, where organizations must balance AI-driven insights with HIPAA compliance. Teams typically implement AI governance frameworks that automate compliance checks throughout the pipeline. These frameworks validate data anonymization before processing, enforce role-based access controls that limit exposure to protected health information, and create immutable audit trails documenting every transformation. Cisco’s 2026 Data and Privacy Benchmark Study reveals that organizations with mature data governance practices report 80% fewer privacy incidents than those without formal frameworks.
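One way to “validate data anonymization before processing” is a k-anonymity check over quasi-identifiers, the columns that could re-identify someone when combined. This is a minimal sketch with hypothetical column names; passing it does not by itself establish HIPAA compliance, which defines its own de-identification standards.

```python
from collections import Counter


def k_anonymity_violations(records: list[dict], quasi_identifiers: list[str], k: int) -> list[tuple]:
    """Return quasi-identifier combinations shared by fewer than k records.

    An empty list means each combination appears at least k times, i.e. the
    dataset is k-anonymous with respect to these columns.
    """
    groups = Counter(tuple(r[q] for q in quasi_identifiers) for r in records)
    return [combo for combo, count in groups.items() if count < k]


# Usage: hypothetical columns; block processing if any group is too small.
records = [
    {"zip3": "941", "age_band": "30-39", "diagnosis": "A"},
    {"zip3": "941", "age_band": "30-39", "diagnosis": "B"},
    {"zip3": "941", "age_band": "30-39", "diagnosis": "A"},
    {"zip3": "100", "age_band": "60-69", "diagnosis": "C"},
]
violations = k_anonymity_violations(records, ["zip3", "age_band"], k=3)
print(violations)  # [('100', '60-69')]
```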

Financial services present another compelling pattern. Banks implementing real-time fraud detection must process transaction data immediately while respecting data residency requirements across multiple jurisdictions. A well-designed ethical pipeline segments data geographically, processes region-specific information within compliant cloud zones, and applies GenAI models that explain their fraud detection reasoning to satisfy regulatory transparency requirements. The key insight: ethical data pipelines succeed when technical architecture aligns with compliance requirements from day one, not as an afterthought.
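A minimal sketch of geographic segmentation that fails closed: a record is routed only to a zone approved for its jurisdiction, and it is rejected rather than processed elsewhere when no approved zone is available. The jurisdiction codes and zone names are placeholders, not real cloud regions.

```python
# Hypothetical mapping from residency jurisdiction to approved processing zones.
# Names are placeholders, not real cloud regions.
APPROVED_ZONES: dict[str, set[str]] = {
    "EU": {"eu-zone-1", "eu-zone-2"},
    "US": {"us-zone-1"},
}


class ResidencyError(Exception):
    """Raised when a record cannot be processed in a compliant zone."""


def route(record: dict, available_zones: list[str]) -> str:
    """Return the first available zone approved for the record's jurisdiction.

    Fails closed: unknown jurisdictions and missing zones raise instead of
    falling back to a non-compliant location.
    """
    jurisdiction = record.get("jurisdiction")
    allowed = APPROVED_ZONES.get(jurisdiction, set())
    if not allowed:
        raise ResidencyError(f"no residency policy for {jurisdiction!r}")
    for zone in available_zones:
        if zone in allowed:
            return zone
    raise ResidencyError(f"no approved zone available for {jurisdiction!r}")


print(route({"txn_id": 1, "jurisdiction": "EU"}, ["us-zone-1", "eu-zone-2"]))  # eu-zone-2
```

Raising instead of falling back trades availability for compliance; for fraud detection, a team would pair this with capacity planning so approved zones are rarely unavailable.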

However, even well-intentioned implementations face challenges when scaling or adapting to new regulatory landscapes.

Limitations and Considerations

Despite the transformative potential of ethical data pipelines enhanced by GenAI, organizations face significant technical and operational constraints that require careful navigation. Understanding these limitations helps set realistic expectations while building resilient systems.

Technical Constraints and Resource Requirements

GenAI models demand substantial computational resources that strain existing infrastructure. Organizations often discover that their current cloud architecture cannot handle the processing loads required for real-time ethical oversight. Cybersecurity planning for 2026 emphasizes how resource constraints force difficult trade-offs between model sophistication and operational efficiency. Organizations typically find themselves choosing between comprehensive ethical monitoring and faster processing speeds, rarely achieving both optimally.

The Challenge of Algorithmic Bias

While GenAI enhances data governance, it simultaneously introduces new forms of bias. Bias detection mechanisms themselves can perpetuate existing prejudices when trained on historical data that reflects systemic inequities. Organizations often implement sophisticated bias detection only to discover that their detection algorithms carry forward biases from training data. One practical approach is multi-layered validation, where humans review algorithmic decisions, though this adds complexity and latency.
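A sketch of one automated check feeding a human review step, assuming decisions are available as (group, outcome) pairs. It compares selection rates across groups; the 0.8 threshold echoes the common “four-fifths” heuristic but is an assumption here. The metric inherits any bias in the group labels and outcomes it is given, which is why it only flags batches for people to examine.

```python
def selection_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of positive outcomes per group, from (group, approved) pairs."""
    totals: dict[str, int] = {}
    positives: dict[str, int] = {}
    for group, approved in decisions:
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + int(approved)
    return {group: positives[group] / totals[group] for group in totals}


def needs_human_review(decisions: list[tuple[str, bool]], threshold: float = 0.8) -> bool:
    """Flag a batch when the lowest group's selection rate is below
    `threshold` times the highest group's rate (a disparate-impact ratio)."""
    rates = selection_rates(decisions)
    if not rates:
        return False  # empty batch: nothing to compare
    highest = max(rates.values())
    if highest == 0:
        return False  # nobody selected: no disparity to measure
    return min(rates.values()) / highest < threshold


# Usage: group A approved 8/10, group B 5/10 -> ratio 0.625, flagged.
batch = [("A", True)] * 8 + [("A", False)] * 2 + [("B", True)] * 5 + [("B", False)] * 5
print(needs_human_review(batch))  # True
```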

Evolving Regulatory Complexity

The regulatory landscape shifts faster than most organizations can adapt their pipelines. Responsible AI implementation research notes how organizations struggle with conflicting requirements across jurisdictions—a pipeline compliant in one region may violate regulations elsewhere. This creates ongoing maintenance burdens where teams constantly update rules engines and consent frameworks.
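Keeping jurisdiction-specific rules as data rather than hard-coded logic makes those constant updates easier to manage and audit. The sketch below uses hypothetical regions and rules that do not describe any real law; each decision returns the rule version it was based on, so it can be traced later.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConsentRule:
    version: str                  # bumped whenever the rule changes
    requires_opt_in: bool
    allowed_purposes: frozenset[str]


# Hypothetical rules table. Regions and rules are placeholders and do not
# describe any real law; in practice they would come from legal review.
RULES: dict[str, ConsentRule] = {
    "region_a": ConsentRule("2026-01", True, frozenset({"analytics"})),
    "region_b": ConsentRule("2025-07", False, frozenset({"analytics", "personalization"})),
}


def may_process(record: dict, purpose: str) -> tuple[bool, str]:
    """Return (decision, rule version) so every decision can be audited.

    Unknown jurisdictions fail closed.
    """
    rule = RULES.get(record.get("jurisdiction"))
    if rule is None:
        return False, "no-rule"
    if purpose not in rule.allowed_purposes:
        return False, rule.version
    if rule.requires_opt_in and not record.get("opted_in", False):
        return False, rule.version
    return True, rule.version
```

When a regulation changes, the team edits one table entry and its version string, and past decisions remain explainable by the version recorded with them.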

These limitations don’t invalidate ethical data pipelines but rather frame realistic implementation boundaries. As the field matures, addressing these constraints becomes increasingly critical.

Future Trends in Ethical Data Practices

The convergence of regulatory pressure, technological capability, and market demand is reshaping ethical data practices heading into 2026 and beyond. Organizations that anticipate these shifts will gain competitive advantages while those playing catch-up face increasing friction.

Privacy-Enhancing Technologies Become Standard

Privacy-enhancing technologies (PETs) are transitioning from experimental tools to production-grade infrastructure. Techniques like federated learning, which trains models across distributed datasets without centralizing sensitive information, and homomorphic encryption, which allows computation on encrypted data, are moving from research labs into enterprise pipelines. These approaches address a fundamental tension: extracting analytical value while minimizing data exposure. For regulated industries like healthcare and financial services, PETs offer pathways to collaboration that previously seemed impossible due to compliance barriers.
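A toy illustration of federated averaging (FedAvg) for a one-feature linear model: each client runs a local training pass on its own rows, and only the resulting weights are averaged centrally, weighted by client dataset size. On its own this is not a privacy guarantee, since model updates can leak information, so it is typically combined with techniques such as secure aggregation or differential privacy.

```python
Example = tuple[list[float], float]  # (features, target)


def local_update(weights: list[float], data: list[Example], lr: float = 0.01) -> list[float]:
    """One pass of SGD for linear regression on a single client's private data."""
    w = list(weights)
    for x, y in data:
        error = sum(wi * xi for wi, xi in zip(w, x)) - y
        w = [wi - lr * error * xi for wi, xi in zip(w, x)]
    return w


def federated_average(updates: list[tuple[list[float], int]]) -> list[float]:
    """Average client weights, weighting each client by its number of examples."""
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [sum(w[i] * n for w, n in updates) / total for i in range(dim)]


def train_round(global_weights: list[float], clients: list[list[Example]]) -> list[float]:
    # Each client trains locally; only weights are sent back, never raw rows.
    updates = [(local_update(global_weights, data), len(data)) for data in clients]
    return federated_average(updates)


# Usage: two clients whose private data both follow y = 2x.
clients = [
    [([1.0], 2.0), ([2.0], 4.0)],
    [([3.0], 6.0)],
]
weights = [0.0]
for _ in range(200):
    weights = train_round(weights, clients)
print(round(weights[0], 3))  # 2.0
```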

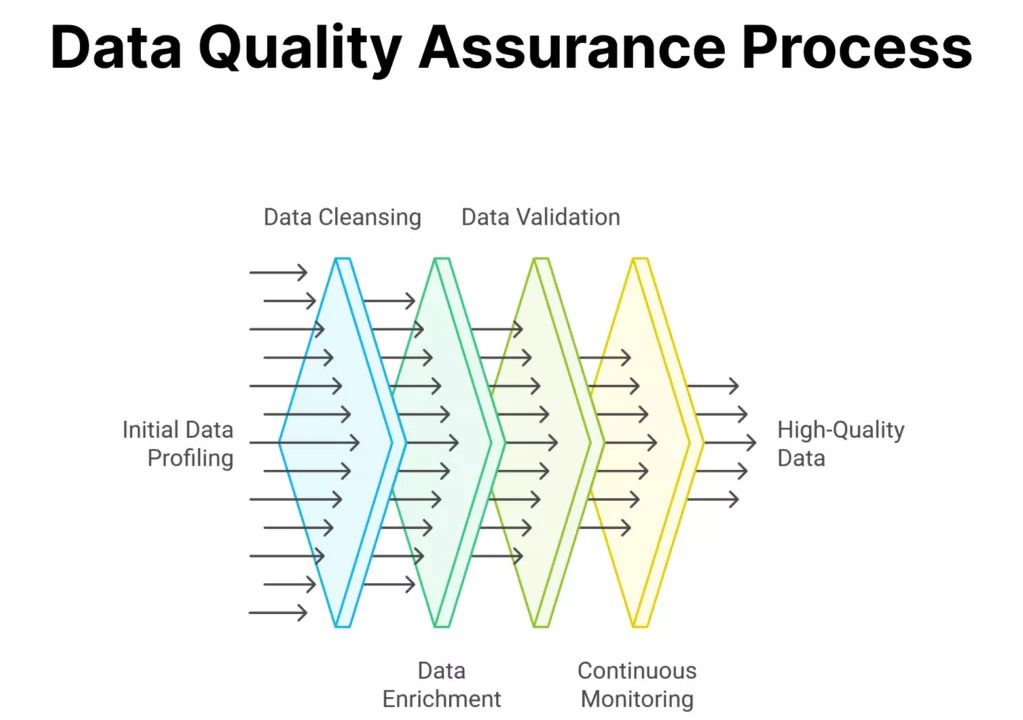

Continuous Data Quality Assurance and Ethics Monitoring

Static compliance checks are giving way to continuous monitoring systems that track both technical performance and ethical alignment in real time. Modern pipelines integrate data quality assurance mechanisms that validate accuracy, completeness, and representativeness at every transformation stage. Beyond traditional data quality metrics, organizations are implementing bias detection algorithms that flag statistical anomalies suggesting discriminatory patterns. According to Kellton’s analysis of AI trends, automated governance frameworks that combine technical validation with ethical assessment will become essential infrastructure rather than optional enhancements.
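A minimal sketch of batch-level checks for completeness and representativeness. The reference shares and the thresholds (`min_completeness`, `tolerance`) are assumptions that a team would set from its own population data; the function returns human-readable issues instead of raising, so a pipeline can decide whether to quarantine a batch or just alert.

```python
def completeness(rows: list[dict], column: str) -> float:
    """Fraction of rows where `column` is present and not None."""
    if not rows:
        return 0.0
    return sum(row.get(column) is not None for row in rows) / len(rows)


def representation_gaps(rows: list[dict], column: str,
                        reference: dict[str, float], tolerance: float = 0.05) -> dict[str, float]:
    """Groups whose observed share differs from the reference share by more than `tolerance`."""
    counts: dict[str, int] = {}
    for row in rows:
        value = row.get(column)
        if value is not None:
            counts[value] = counts.get(value, 0) + 1
    total = sum(counts.values())
    gaps = {}
    for group, expected in reference.items():
        observed = counts.get(group, 0) / total if total else 0.0
        if abs(observed - expected) > tolerance:
            gaps[group] = round(observed - expected, 3)
    return gaps


def check_batch(rows, required_columns, group_column, reference, min_completeness=0.95):
    """Collect human-readable issues for one batch instead of failing fast."""
    issues = []
    for column in required_columns:
        score = completeness(rows, column)
        if score < min_completeness:
            issues.append(f"{column}: completeness {score:.0%}")
    for group, gap in representation_gaps(rows, group_column, reference).items():
        issues.append(f"{group}: share off by {gap:+.1%}")
    return issues


# Usage: reference shares are an assumed target population.
batch = [{"region": "north", "age": 34}] * 7 + [{"region": "south", "age": None}] * 3
print(check_batch(batch, ["age"], "region", {"north": 0.5, "south": 0.5}))
```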

From Compliance to Competitive Differentiation

The most forward-thinking organizations are repositioning ethical data practices from cost centers to value drivers. What happens when privacy becomes a product feature customers actively choose? Companies demonstrating verifiable ethical practices—through third-party audits, transparent reporting, and user-controlled data governance—are differentiating themselves in crowded markets. This shift transforms ethical pipelines from regulatory requirements into strategic assets that build customer trust and enable premium positioning.

Key Takeaways

Building ethical data pipelines enhanced by GenAI requires a fundamental shift in how organizations approach data governance, transparency, and accountability. The convergence of cloud infrastructure, artificial intelligence, and privacy-first design creates unprecedented opportunities for organizations to demonstrate their commitment to responsible data practices while delivering measurable business value.

Audit trails for AI systems represent the operational backbone of accountability, ensuring every data transformation, access event, and model decision remains traceable and explainable. These systems don’t just satisfy compliance requirements; they build the foundational trust necessary for customers, partners, and regulators to engage confidently with AI-powered services.
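A hash chain is one common way to make an audit trail tamper-evident. In this sketch each entry stores the SHA-256 hash of its event plus the previous entry’s hash, so editing any entry, or deleting any entry except the newest, breaks `verify()`. It is tamper-evident rather than tamper-proof: anyone able to rewrite the whole log could recompute every hash, so the latest hash would need to be anchored somewhere outside the writer’s control, which also catches truncation of the newest entries.

```python
import copy
import hashlib
import json


class AuditTrail:
    """Append-only log where each entry commits to the previous entry's hash."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(prev: str, event: dict) -> str:
        payload = json.dumps({"prev": prev, "event": event}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def append(self, event: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        event = copy.deepcopy(event)  # later edits to the caller's dict can't leak in
        digest = self._digest(prev, event)
        self.entries.append({"prev": prev, "event": event, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every hash; edits, reordering, or removal of any
        entry other than the newest make verification fail."""
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or entry["hash"] != self._digest(prev, entry["event"]):
                return False
            prev = entry["hash"]
        return True


trail = AuditTrail()
trail.append({"action": "read", "user": "maya", "dataset": "claims"})
trail.append({"action": "transform", "step": "deduplicate"})
print(trail.verify())  # True
trail.entries[0]["event"]["user"] = "someone_else"
print(trail.verify())  # False
```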

The path forward demands intentional choices: implementing privacy-by-design principles rather than retrofitting compliance, selecting cloud partners who share ethical commitments, and investing in continuous monitoring systems that detect drift before it becomes damage. Organizations that treat ethics as a competitive differentiator rather than a checkbox exercise will capture both market share and customer loyalty in an increasingly privacy-conscious landscape.

What happens next separates leaders from laggards. Winners conduct regular ethical impact assessments, maintain cross-functional ethics committees, and publish transparency reports that detail both successes and failures. They recognize that perfect systems don’t exist, but honest, iterative improvement builds credibility.

Start small, think big: identify one high-impact data pipeline, implement comprehensive monitoring and governance controls, measure the business outcomes, then scale proven patterns across your organization. The ethical data practices you implement today will define your competitive position tomorrow.

FAQs

What is a cloud data pipeline?

A cloud data pipeline is a scalable system that collects, processes, transforms, and moves data across cloud platforms in real time or in batches. It enables organizations to power analytics, AI, and GenAI applications efficiently while ensuring governance, security, and compliance.

What is the difference between data pipeline and AI pipeline?

A data pipeline focuses on collecting, cleaning, transforming, and moving data for storage or analytics, while an AI pipeline goes further by using that processed data to train, validate, deploy, and monitor machine learning or AI models. In short, data pipelines prepare the data, and AI pipelines turn that data into intelligent predictions and automation.

What are the main 3 stages in a data pipeline?

The three main stages in a data pipeline are Data Ingestion (collecting data from various sources), Data Processing/Transformation (cleaning and transforming the data), and Data Storage or Loading (storing it in databases or data warehouses for analysis and AI use).

Which 3 jobs will survive AI?

Three jobs most likely to survive and evolve with AI are Healthcare Professionals (because empathy and complex human care can’t be automated), Skilled Trades Professionals (electricians, plumbers, technicians requiring physical expertise), and AI & Data Specialists who design, manage, and govern intelligent systems.

What are the 4 pipeline stages?

The four main pipeline stages are Ingestion (collecting data), Processing (cleaning and transforming data), Storage (saving data in databases or warehouses), and Analysis/Consumption (using the data for analytics, reporting, or AI applications).