Data Science

Data Science combines statistical analysis, machine learning, and domain expertise to extract meaningful insights from data. Explore the latest advancements, techniques, and applications in our Data Science blog posts below.

As a rapidly evolving field, Data Science is at the forefront of innovation in technology and business. From predictive modeling to natural language processing, data science techniques are transforming industries and driving new discoveries.

How does Data Science drive innovation and business growth?

Find the related blogs below to explore how Data Science drives innovation and business growth.

Related Blogs

- Data Science: Top Resources and Free Learning Opportunities

by matthew

Data Science has become one of the most sought-after fields in the world today. With applications across various industries like healthcare, finance, retail, and marketing, data science is revolutionizing the way we make decisions, solve problems, and understand the world around us. For those interested in entering this exciting and lucrative field, the first question often is: how do I begin? Fortunately, with the advent of numerous online resources, many of which are free, aspiring data scientists now have more opportunities than ever to get started. In this article, we will explore what Data Science is, why it’s so crucial… Read more: Data Science: Top Resources and Free Learning Opportunities

- Data Engineering: A Comprehensive Guide

by matthew

Introduction. In today’s digital world, data is considered the new oil. Organizations rely on vast amounts of data to make informed decisions, improve operations, and drive business growth. However, raw data is often unstructured, scattered across multiple sources, and difficult to analyze. This is where data engineering comes into play. Data engineering is the process of designing, building, and managing the infrastructure that allows for the efficient collection, storage, and processing of data. This comprehensive guide will explore what data engineering is, its key components, the tools used, best practices, and its role in the broader field of data science… Read more: Data Engineering: A Comprehensive Guide

- Descriptive Statistics Overview

by matthew

Introduction to Descriptive Statistics. Descriptive statistics is a fundamental aspect of data analysis that helps in summarizing and interpreting large datasets in a meaningful way. It involves the use of various statistical tools to describe and organize data, making it easier to understand patterns and trends. Unlike inferential statistics, which focuses on making predictions or generalizations about a population based on a sample, descriptive statistics merely provides a snapshot of the given dataset. Understanding descriptive statistics is crucial for researchers, analysts, and businesses, as it provides a foundation for data-driven decision-making. This branch of statistics enables the representation of data… Read more: Descriptive Statistics Overview

- Efficient Techniques for Data Reconciliation

by matthew

Introduction. In today’s data-driven world, businesses rely on vast amounts of data for decision-making, financial reporting, and operational efficiency. However, inconsistencies, errors, and discrepancies in data often pose challenges, leading to incorrect insights and poor business decisions. Data reconciliation is the process of ensuring data accuracy and consistency across different systems, sources, and databases. It plays a critical role in finance, accounting, supply chain management, and various other industries where data integrity is paramount. This article explores the best techniques for data reconciliation and how organizations can implement them efficiently. Understanding Data Reconciliation. Data reconciliation refers to the process of… Read more: Efficient Techniques for Data Reconciliation

- Comprehensive Guide to Descriptive Statistics: 8 Essential Insights

by Durgesh Kekare

Introduction to Descriptive Statistics. Descriptive statistics provide a simple summary of the sample and the measures. They form the foundation of quantitative data analysis by summarizing data to understand patterns, trends, and general insights. These statistics are essential for data scientists, analysts, and researchers who need to interpret and present data meaningfully. In the world of data analytics, descriptive statistics play a vital role in the initial phase of data analysis. They help in transforming raw data into meaningful information, paving the way for further analysis and decision-making. The primary goal is to provide insights into the data’s structure, variability,… Read more: Comprehensive Guide to Descriptive Statistics: 8 Essential Insights

- 12 Crucial Steps for Effective Time Series Analysis in Business Forecasting

by Durgesh Kekare

Introduction to Time Series Analysis. Time series analysis is a statistical technique that deals with time-ordered data points. Businesses can uncover patterns, trends, and seasonal variations by analyzing data points collected or recorded at specific time intervals, making it an invaluable tool for forecasting and strategic planning. What is Time Series Analysis? Time series analysis involves using various statistical methods to analyze time-ordered data. This type of analysis helps understand the underlying patterns of data over time, identifying trends, seasonal effects, and cyclic patterns. Through time series analysis, businesses can predict future values based on previously observed values. To answer… Read more: 12 Crucial Steps for Effective Time Series Analysis in Business Forecasting

- 12 Innovative Data Science Projects for 2024: Transforming Ideas into Reality

by Durgesh Kekare

Introduction to Data Science Projects. Data science projects are essential for transforming raw data into actionable insights. These projects help solve complex problems and drive innovation by leveraging various data science techniques and tools. In 2024, the scope and impact of data science projects will continue growing, offering new opportunities for beginners and experienced professionals. Why Data Science Projects Matter. Data science projects are not just about analyzing data; they are about solving real-world problems. They provide hands-on experience, improve analytical skills, and demonstrate the practical applications of data science. These projects also bridge the gap between data science and… Read more: 12 Innovative Data Science Projects for 2024: Transforming Ideas into Reality

- What is Data Science? A Comprehensive Beginner’s Guide

by Durgesh Kekare

Introduction to Data Science. Data Science is an interdisciplinary field that uses scientific methods, processes, algorithms, and systems to extract knowledge and insights from structured and unstructured data. It combines facets of statistics, data analysis, machine learning, and their related methods to understand and analyze actual phenomena with data. Data Science is the extraction of knowledge from data, which involves a wide spectrum of tools and techniques to understand vast amounts of information. This guide introduces the fundamentals of Data Science, exploring how this discipline powers decision-making across sectors by deriving actionable insights from raw data. The Pillars of Data Science… Read more: What is Data Science? A Comprehensive Beginner’s Guide

- Data Preprocessing in Depth: Advanced Techniques for Data Scientists

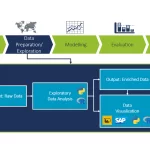

by Durgesh Kekare

Introduction to Data Preprocessing. Data preprocessing is a fundamental step in the data science pipeline, crucial for transforming raw data into a clean, accurate, and usable format. This blog delves into advanced data preprocessing techniques that every data scientist should master to unlock deeper insights and achieve optimal results in their analyses and machine learning models. The Crucial Role of Data Preprocessing for Data Scientists. For data scientists, data preprocessing is not just a preliminary step but a strategic phase that significantly influences the outcome of data projects. It’s about ensuring data integrity, enhancing model accuracy, and extracting the most… Read more: Data Preprocessing in Depth: Advanced Techniques for Data Scientists

- Beyond the Basics: Advanced Deep Learning in Complex Applications

by Durgesh Kekare

Introduction to Advanced Deep Learning. As we delve into the realms beyond basic neural networks, deep learning reveals its profound capability to transform industries, innovate solutions, and unravel complexities within vast pools of data. This blog explores the nuanced applications of deep learning, showcasing how this technology is not just an evolution but a revolution in the field of artificial intelligence. Advanced deep learning extends beyond foundational models and algorithms, venturing into the development of systems capable of understanding, learning, and making decisions with minimal human intervention. This advanced tier of deep learning paves the way for machines to tackle… Read more: Beyond the Basics: Advanced Deep Learning in Complex Applications

- Reinforcement Learning: The Next Step After Supervised Learning

by Durgesh Kekare

Introduction to Reinforcement Learning. In the realm of artificial intelligence, reinforcement learning (RL) emerges as a powerful paradigm, distinguished from its supervised and unsupervised counterparts. This blog delves into the essence of reinforcement learning, marking it as a crucial step beyond supervised learning in the AI evolution journey. Beyond the basics, reinforcement learning embodies a paradigm where machines not only predict but also act, learning from the consequences of their actions in a dynamic environment. This approach, drawing inspiration from the way humans and animals learn from their experiences, represents a significant leap in the field of artificial intelligence, enabling… Read more: Reinforcement Learning: The Next Step After Supervised Learning

- 5 Essential Steps in Feature Engineering for Enhanced Predictive Models

by Durgesh Kekare

Introduction. Welcome to our in-depth exploration of feature engineering, a crucial aspect of building effective predictive models in data science. This blog will delve into the art and science of feature engineering, discussing how the right techniques can significantly enhance the performance of machine learning models. We’ll explore various methods of transforming and manipulating data to better suit the needs of algorithms, thereby unlocking the full potential of predictive modeling. Understanding the Basics of Feature Engineering. Feature engineering involves creating new features from existing data and selecting the most relevant features for use in predictive models. It’s a critical step… Read more: 5 Essential Steps in Feature Engineering for Enhanced Predictive Models

- 5 Vital Metrics: Excelling in ML Model Performance

by Durgesh Kekare

Introduction. Welcome to our in-depth exploration of model evaluation in machine learning. This blog delves into the critical metrics and methodologies used to assess the performance of ML models. Understanding these metrics is essential for data professionals to ensure their models are accurate, reliable, and effective. Accuracy: The Starting Point in ML Model Evaluation. Accuracy is a fundamental metric in ML model evaluation, measuring the proportion of correct predictions made by the model. While accuracy is a straightforward and intuitive metric, it can be misleading in cases of imbalanced datasets where one class significantly outnumbers the other. For instance, in… Read more: 5 Vital Metrics: Excelling in ML Model Performance

- 5 Essential Data Preprocessing Techniques: Data Cleaning and Preparing Your Data for Analysis

by Durgesh Kekare

Introduction. Welcome to our comprehensive guide on data preprocessing techniques, a crucial step in the data analysis process. In this blog, we explore the importance of data cleaning and preparing your data, ensuring it’s ready for insightful analysis. We’ll delve into five key preprocessing techniques that are essential for any data scientist or analyst. Data Cleaning: Removing Inaccuracies and Irregularities. Data cleaning involves identifying and correcting errors and inconsistencies in your data to improve its quality and accuracy. Effective data cleaning not only rectifies inaccuracies but also ensures consistency across datasets. This process can involve dealing with anomalies, duplicate entries,… Read more: 5 Essential Data Preprocessing Techniques: Data Cleaning and Preparing Your Data for Analysis

- Deep Learning Fundamentals: Neural Networks and Applications

by Durgesh Kekare

Introduction. Welcome to the fascinating world of Deep Learning, a subset of machine learning that’s transforming how we interact with technology. This blog will guide you through the basics of neural networks and their diverse applications. We’ll explore how these advanced algorithms mimic the human brain to solve complex problems, making significant impacts across various industries. Our focus keyword, ‘Deep Learning’, is central to this exploration. It’s a field that’s not just about programming or data – it’s about understanding and leveraging the power of neural networks to create intelligent systems. As we delve into this topic, we’ll use real-time… Read more: Deep Learning Fundamentals: Neural Networks and Applications

- Supervised Learning vs. Unsupervised Learning: Choosing the Right Approach

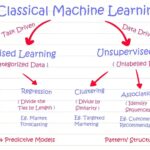

by Durgesh Kekare

Introduction. Embarking on the journey of machine learning can often lead to the crossroads of choosing between Supervised and Unsupervised Learning. This blog delves into the nuances of both approaches, guiding you to make an informed decision for your specific needs. We’ll explore the strengths, applications, and real-time examples of each, ensuring a comprehensive understanding of these fundamental machine-learning techniques. Our focus keyword, ‘Supervised vs. Unsupervised Learning’, is central to this discussion, providing insights into the world of artificial intelligence and data science. As we unfold the layers of these methodologies, we will see their impact and relevance in various… Read more: Supervised Learning vs. Unsupervised Learning: Choosing the Right Approach

- Data Exploration and Visualization: Uncovering Hidden Patterns

by Durgesh Kekare

In the realm of data science, the journey toward informed decision-making begins with two crucial pillars: data exploration and visualization. These foundational steps are not merely introductory; they are integral to the entire data analysis process. By delving deeper into data exploration and visualization, we can uncover concealed patterns, draw meaningful insights, and unlock the potential of data-driven decision-making. Introduction to Data Exploration. Data exploration serves as the essential precursor to any data analysis endeavor. It involves an initial, in-depth investigation of the dataset’s characteristics, structure, and nuances. This phase of the data science journey is marked by several key… Read more: Data Exploration and Visualization: Uncovering Hidden Patterns

- Introduction: Unveiling the Magic of Machine Learning

by Durgesh Kekare

Machine learning, often abbreviated as ML, is a captivating field that combines the realms of computer science and artificial intelligence. At its core, ML revolves around the idea of teaching computers to learn from data and make decisions or predictions based on that acquired knowledge. In this in-depth beginner’s guide, we are set to unravel the mysteries of machine learning, explore its wide-ranging applications, and provide you with the essential knowledge to embark on your own ML journey with confidence. Understanding the Essence of Machine Learning. Let’s start at the very beginning. What exactly is machine learning? ML stands as… Read more: Introduction: Unveiling the Magic of Machine Learning

- Traditional Analytics vs. Data Science: 7 Things You Should Know

by Durgesh Kekare

In the realm of data and analytics, we are amid a profound transformation. Data science, with its advanced methodologies and tools, is heralding a new era. The days of traditional analytics are giving way to a data-driven revolution that empowers organizations with deeper insights, more accurate predictions, and smarter decisions. The Limitations of Traditional Analytics. Traditional analytics, while valuable, has its limitations. It primarily deals with historical data, focusing on past performance. It’s akin to driving while looking in the rearview mirror. This approach, although essential, often falls short when the goal is to anticipate future trends and take proactive… Read more: Traditional Analytics vs. Data Science: 7 Things You Should Know

- 7 Preeminent Data Science Tools and Skills for Excellence

by Durgesh Kekare

In the ever-evolving landscape of data science, one thing remains constant: the importance of the data scientist’s toolkit. Data scientists are the modern-day alchemists, turning raw data into valuable insights that power decision-making and innovation. In this blog, we’ll dive deep into the essential skills and tools that equip data scientists for success. Understanding Data Science. At its core, data science is the art of transforming raw data into actionable insights. It’s a multidisciplinary field that combines expertise in statistics, programming, and domain knowledge. Here are the fundamental skills and tools that every data scientist should have in their toolkit:… Read more: 7 Preeminent Data Science Tools and Skills for Excellence
