Data science doesn’t reward guesswork. When businesses make decisions based on incomplete or misleading statistical analysis, the consequences ripple through every department — from product development to revenue forecasting. That’s exactly where ANOVA earns its reputation as an indispensable tool in any serious analyst’s toolkit.

The global data science market is expanding at a relentless pace, and with that growth comes an intensifying demand for statistical rigor. Organizations are no longer satisfied with surface-level insights. They need analysts who can extract reliable conclusions from messy, multi-group datasets — and do it with confidence.

Here’s a problem that comes up constantly in practice: a simple mean comparison such as a t-test works well when you’re evaluating two groups. But what happens when you need to compare customer satisfaction scores across five regional markets? Or measure the performance of four different marketing campaigns simultaneously? Running every pairwise two-group comparison isn’t just inefficient; it sharply inflates the risk of false positives, manufacturing statistical significance where none exists.
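
To put a number on that inflation, here is a minimal Python sketch. It assumes each pairwise test uses a 0.05 significance level and treats the tests as independent. Real pairwise comparisons share data and are not independent, so the figures are approximations rather than exact error rates:

```python
# Approximate family-wise error rate when every pair of groups gets its own test.
# Assumption: each test uses alpha = 0.05 and the tests are treated as independent.
from math import comb


def pairwise_tests(num_groups: int) -> int:
    """Number of distinct group pairs: k choose 2."""
    return comb(num_groups, 2)


def family_wise_error_rate(num_groups: int, alpha: float = 0.05) -> float:
    """Probability of at least one false positive across all pairwise tests."""
    m = pairwise_tests(num_groups)
    return 1 - (1 - alpha) ** m


for k in (2, 3, 5):
    print(k, pairwise_tests(k), round(family_wise_error_rate(k), 3))
```

With five regional markets there are 10 pairwise comparisons, and the chance of at least one false positive climbs to roughly 40%.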

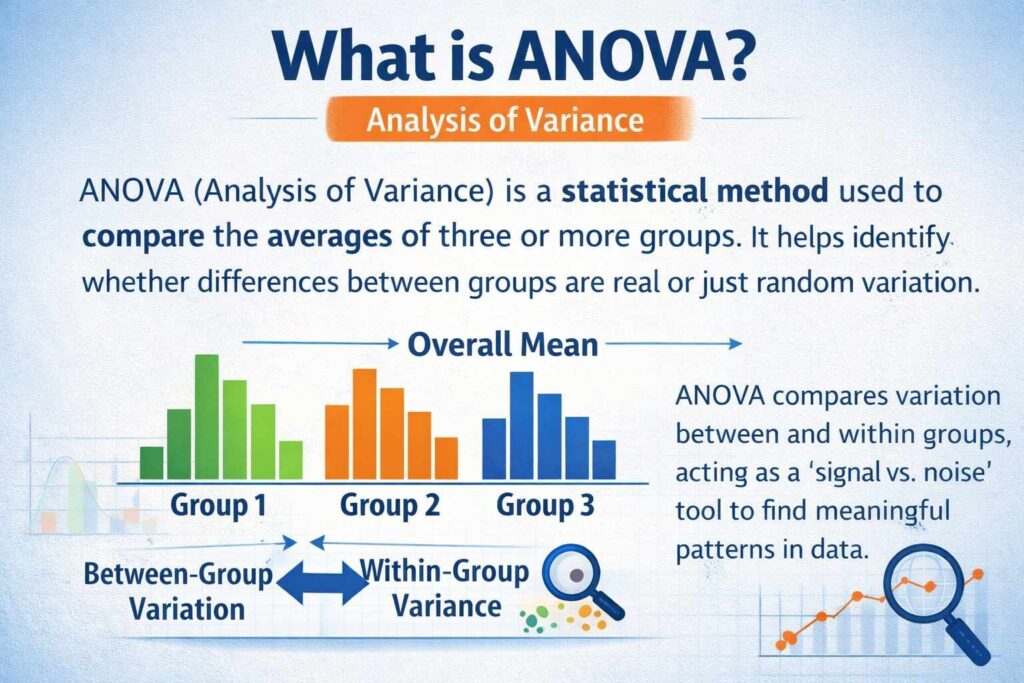

ANOVA cuts through this problem by functioning as a “signal vs. noise” detector. It evaluates whether the variation between your groups is meaningfully larger than the variation within them — separating genuine patterns from random fluctuation in one efficient test.

In complex, real-world environments where dozens of variables compete for attention, that distinction is everything. Understanding how ANOVA accomplishes this, however, requires unpacking a concept that surprises many beginners: why analyzing variance is the smartest path to understanding means.

What is Analysis of Variance (ANOVA)? Meaning and Core Concepts

At its core, Analysis of Variance (ANOVA) is a statistical method used to determine whether the means of three or more groups are significantly different from one another. If you need to know whether customer satisfaction scores vary meaningfully across four product lines, or whether training methods produce different performance outcomes across multiple teams, ANOVA gives you a statistically rigorous answer.

The Paradox: Using Variance to Compare Means

Here’s where things get conceptually interesting. Despite the name suggesting a focus on differences between averages, ANOVA actually works by analyzing variance — the spread of data points — to draw conclusions about group means. That might sound counterintuitive at first.

The logic is elegant, though. ANOVA compares two sources of variability: how much the group means differ from the overall average, and how much individual data points vary within each group. When the variation between groups is substantially larger than the variation within groups, that’s a strong signal the group means are genuinely different — not just products of random noise.

According to research published in PMC, this ratio-based approach is what makes ANOVA particularly powerful for detecting real differences across multiple groups simultaneously.

The Omnibus Test: One Question, Maximum Efficiency

ANOVA functions as an omnibus test: a single procedure that evaluates all groups at once. Rather than running separate t-tests for every possible pair of groups (which rapidly multiplies the risk of false positives), ANOVA asks one broad question: Do any of these groups differ? A significant result answers only that question; identifying which specific groups differ requires a follow-up post-hoc test such as Tukey’s HSD.

For a one-way ANOVA, that question centers on a single independent variable. This efficiency is critical in data science workflows where speed and statistical integrity both matter.

Understanding what ANOVA is testing, however, only takes you so far. To truly harness it, you need to understand the mechanics driving its conclusions — specifically, the F-statistic and what it actually measures.

The Mechanics of ANOVA: Understanding the F-Statistic

Knowing what Analysis of Variance does is one thing — understanding how it does it is where data scientists gain a real edge. The engine running under the hood is the F-statistic, and once you understand its logic, interpreting ANOVA output becomes intuitive rather than intimidating.

Between-Group vs. Within-Group Variance

ANOVA works by splitting total data variability into two distinct sources:

- Between-group variance — how much the group means differ from the overall mean

- Within-group variance — how much individual data points scatter around their own group mean

The F-statistic is the ratio of these two quantities, each expressed as a mean square (a sum of squared deviations divided by its degrees of freedom): between-group variance divided by within-group variance. This division is what makes ANOVA so powerful. A high F-ratio signals that the differences between groups are large relative to the natural noise inside each group, a strong indicator that something meaningful is happening.
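
Written out for $k$ groups, with $n_i$ observations in group $i$, $N$ observations in total, group means $\bar{y}_i$ and grand mean $\bar{y}$ (notation introduced here for illustration), the standard one-way decomposition looks like this:

$$
\begin{aligned}
SS_B &= \sum_{i=1}^{k} n_i\,(\bar{y}_i - \bar{y})^2, & MS_B &= \frac{SS_B}{k-1},\\
SS_W &= \sum_{i=1}^{k}\sum_{j=1}^{n_i} (y_{ij} - \bar{y}_i)^2, & MS_W &= \frac{SS_W}{N-k},\\
F &= \frac{MS_B}{MS_W}.
\end{aligned}
$$

Under the null hypothesis that all group means are equal (and with the usual assumptions satisfied), $F$ follows an F distribution with $k-1$ and $N-k$ degrees of freedom.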

What the F-Ratio Actually Tells You

In practice, a high F-value suggests the group means are genuinely different, not the result of random variation. A low F-value — close to 1.0 — tells a different story: the variance between groups is barely distinguishable from the variance within them. As Qualtrics explains, the F-statistic essentially asks whether your treatment groups are more different from each other than you’d expect by chance.
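
A quick simulation makes this concrete. The sketch below uses plain Python with hypothetical settings (three groups of 10, normal noise with standard deviation 1, 2,000 simulated datasets) and computes F as the between-group mean square over the within-group mean square. When the groups share the same true mean, the average F lands close to 1; shifting one group’s mean pushes it far higher:

```python
import random
from statistics import fmean


def one_way_f(groups: list[list[float]]) -> float:
    """F = between-group mean square / within-group mean square."""
    all_values = [y for g in groups for y in g]
    grand_mean = fmean(all_values)
    means = [fmean(g) for g in groups]
    k, n_total = len(groups), len(all_values)
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    ss_within = sum((y - m) ** 2 for g, m in zip(groups, means) for y in g)
    return (ss_between / (k - 1)) / (ss_within / (n_total - k))


def average_f(group_means, n_per_group=10, runs=2000, seed=42):
    """Average F over many simulated datasets with normal noise (sd = 1)."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(runs):
        groups = [[rng.gauss(mu, 1.0) for _ in range(n_per_group)] for mu in group_means]
        total += one_way_f(groups)
    return total / runs


print(average_f([0.0, 0.0, 0.0]))  # no real difference: hovers near 1
print(average_f([0.0, 0.0, 1.5]))  # one shifted group: much larger
```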

The p-Value: Crossing the Significance Threshold

The F-statistic alone doesn’t close the case. That’s where the p-value steps in: it is the probability of observing an F-statistic at least as large as yours if all the group means were truly equal. If the p-value falls below the conventional threshold of 0.05, the result is deemed statistically significant. That does not mean there is less than a 5% probability the differences occurred randomly; it means data this extreme would be unusual if the null hypothesis of equal means were true. A smaller p-value strengthens the evidence against that null hypothesis, though statistical significance doesn’t automatically equal practical importance.
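
Converting an F-statistic into a p-value means finding the upper-tail area of the F distribution with $k - 1$ and $N - k$ degrees of freedom. Libraries normally handle this (for example SciPy’s `scipy.stats.f.sf`, or `scipy.stats.f_oneway`, which returns the statistic and p-value together). The dependency-free sketch below evaluates the regularized incomplete beta function with a continued fraction, a standard numerical technique; treat it as an illustration rather than a production implementation:

```python
import math

_TINY = 1e-300  # floor that keeps Lentz's method away from division by zero


def _guard(value: float) -> float:
    return value if abs(value) > _TINY else _TINY


def _beta_continued_fraction(a: float, b: float, x: float,
                             max_iter: int = 300, eps: float = 1e-14) -> float:
    """Continued fraction for the incomplete beta function (modified Lentz)."""
    qab, qap, qam = a + b, a + 1.0, a - 1.0
    c = 1.0
    d = 1.0 / _guard(1.0 - qab * x / qap)
    h = d
    for m in range(1, max_iter + 1):
        m2 = 2 * m
        # Even term of the continued fraction.
        aa = m * (b - m) * x / ((qam + m2) * (a + m2))
        d = 1.0 / _guard(1.0 + aa * d)
        c = _guard(1.0 + aa / c)
        h *= d * c
        # Odd term of the continued fraction.
        aa = -(a + m) * (qab + m) * x / ((a + m2) * (qap + m2))
        d = 1.0 / _guard(1.0 + aa * d)
        c = _guard(1.0 + aa / c)
        delta = d * c
        h *= delta
        if abs(delta - 1.0) < eps:
            break
    return h


def regularized_incomplete_beta(a: float, b: float, x: float) -> float:
    """I_x(a, b): the CDF of a Beta(a, b) random variable evaluated at x."""
    if x <= 0.0:
        return 0.0
    if x >= 1.0:
        return 1.0
    log_front = (math.lgamma(a + b) - math.lgamma(a) - math.lgamma(b)
                 + a * math.log(x) + b * math.log1p(-x))
    front = math.exp(log_front)
    # The continued fraction converges fastest for x < (a + 1) / (a + b + 2);
    # otherwise use the symmetry I_x(a, b) = 1 - I_{1-x}(b, a).
    if x < (a + 1.0) / (a + b + 2.0):
        return front * _beta_continued_fraction(a, b, x) / a
    return 1.0 - front * _beta_continued_fraction(b, a, 1.0 - x) / b


def f_test_p_value(f_stat: float, df_between: int, df_within: int) -> float:
    """Upper-tail probability P(F >= f_stat) for F(df_between, df_within)."""
    if f_stat <= 0.0:
        return 1.0
    x = df_within / (df_within + df_between * f_stat)
    return regularized_incomplete_beta(df_within / 2.0, df_between / 2.0, x)


print(f_test_p_value(14.0, 2, 12))  # about 0.00073
```

An F of 14.0 with 2 and 12 degrees of freedom gives a p-value of roughly 0.0007, well below 0.05.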

With the mechanics clear, the next logical question is: which version of ANOVA fits your specific data scenario? That depends heavily on how many variables you’re working with.

Types of ANOVA: From One-Way to Factorial Designs

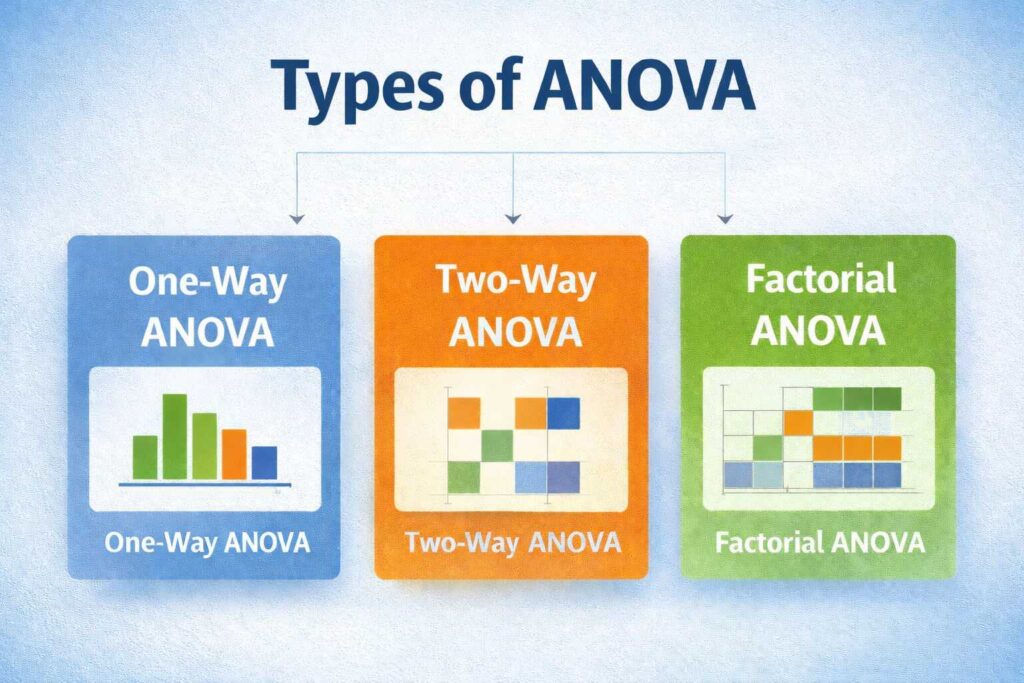

Understanding the F-statistic is just the beginning. The real power of ANOVA emerges when you match the right test design to your specific research question. Fortunately, ANOVA isn’t a one-size-fits-all tool — it comes in several configurations, each built for a different level of complexity.

One-Way ANOVA: One Variable, Multiple Groups

One-way ANOVA is the most straightforward version and the best starting point for anyone new to the method. It tests whether statistically significant differences exist between the means of three or more groups based on a single independent variable.

Example scenario: A data scientist wants to know whether customer satisfaction scores differ across three product lines. There’s one factor (product line) and multiple groups — that’s a textbook one-way ANOVA setup. As InMoment explains, one-way ANOVA is particularly valuable when comparing group means where only one categorical factor is in play.
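
Here is that scenario as a minimal, dependency-free Python sketch. The satisfaction scores are hypothetical, made up for illustration. The function builds the classic ANOVA table (sums of squares, degrees of freedom, mean squares, and F):

```python
from statistics import fmean

# Hypothetical satisfaction scores (1-10 scale) for three product lines.
scores = {
    "Product A": [7, 8, 6, 7, 7],
    "Product B": [5, 6, 5, 4, 5],
    "Product C": [8, 9, 8, 9, 6],
}


def one_way_anova_table(groups: dict[str, list[float]]) -> dict[str, float]:
    """Sums of squares, degrees of freedom, mean squares, and F for one factor."""
    all_values = [y for values in groups.values() for y in values]
    grand_mean = fmean(all_values)
    means = {name: fmean(values) for name, values in groups.items()}
    ss_between = sum(len(v) * (means[name] - grand_mean) ** 2 for name, v in groups.items())
    ss_within = sum((y - means[name]) ** 2 for name, v in groups.items() for y in v)
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    ms_between = ss_between / df_between
    ms_within = ss_within / df_within
    return {
        "ss_between": ss_between, "df_between": df_between, "ms_between": ms_between,
        "ss_within": ss_within, "df_within": df_within, "ms_within": ms_within,
        "f": ms_between / ms_within,
    }


table = one_way_anova_table(scores)
print(f"F({table['df_between']}, {table['df_within']}) = {table['f']:.2f}")  # F(2, 12) = 14.00
```

If SciPy is available, `scipy.stats.f_oneway(*scores.values())` should report the same statistic along with its p-value.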

The limitation here is obvious: real-world data rarely operates in isolation. One variable seldom tells the whole story.

Two-Way ANOVA: Introducing Interaction Effects

This is where the analysis gets notably more powerful. Two-way ANOVA examines the effect of two independent variables on a single outcome — and, critically, whether those two variables interact with each other.

Interaction effects are the real insight generator in two-way ANOVA. They reveal whether the impact of one variable depends on the level of another — something one-way ANOVA simply cannot detect.

Example scenario: A retailer analyzes sales performance by both region and season simultaneously. Two-way ANOVA would not only measure each factor’s individual effect but also whether seasonal trends differ by region.
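
A minimal sketch of the two-way calculation, assuming a balanced design (the same number of observations in every region-season cell). The sales figures are hypothetical and deliberately crossed: each region’s overall average is identical, and so is each season’s, yet the seasonal pattern reverses between regions. Only the interaction term picks this up:

```python
from statistics import fmean

# Hypothetical weekly sales: sales[region][season] -> replicate observations.
# Balanced design assumed: every cell has the same number of observations.
sales = {
    "North": {"Summer": [10, 12, 11], "Winter": [20, 22, 21]},
    "South": {"Summer": [20, 21, 22], "Winter": [10, 11, 12]},
}


def two_way_anova(data):
    """Sums of squares, degrees of freedom, and F for rows, cols, interaction."""
    rows = list(data)
    cols = list(next(iter(data.values())))
    n = len(data[rows[0]][cols[0]])  # replicates per cell
    a, b = len(rows), len(cols)
    cell_mean = {(r, c): fmean(data[r][c]) for r in rows for c in cols}
    row_mean = {r: fmean([y for c in cols for y in data[r][c]]) for r in rows}
    col_mean = {c: fmean([y for r in rows for y in data[r][c]]) for c in cols}
    grand = fmean([y for r in rows for c in cols for y in data[r][c]])

    ss_rows = b * n * sum((row_mean[r] - grand) ** 2 for r in rows)
    ss_cols = a * n * sum((col_mean[c] - grand) ** 2 for c in cols)
    # Interaction: what is left in each cell mean after removing both main effects.
    ss_inter = n * sum(
        (cell_mean[r, c] - row_mean[r] - col_mean[c] + grand) ** 2
        for r in rows for c in cols
    )
    ss_error = sum((y - cell_mean[r, c]) ** 2 for r in rows for c in cols for y in data[r][c])

    df = {"rows": a - 1, "cols": b - 1, "interaction": (a - 1) * (b - 1), "error": a * b * (n - 1)}
    ss = {"rows": ss_rows, "cols": ss_cols, "interaction": ss_inter, "error": ss_error}
    ms_error = ss_error / df["error"]
    f = {k: (ss[k] / df[k]) / ms_error for k in ("rows", "cols", "interaction")}
    return ss, df, f


ss, df, f = two_way_anova(sales)
print(f)  # {'rows': 0.0, 'cols': 0.0, 'interaction': 300.0}
```

Here "rows" is region and "cols" is season. Both main-effect F values are 0 while the interaction F is 300, exactly the kind of pattern a one-way analysis of either factor would miss. Unbalanced designs need a different decomposition (for example, regression-based Type II or Type III sums of squares), which this sketch does not handle.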

Factorial ANOVA: Scaling Up Complexity

Factorial ANOVA is the general name for designs that cross two or more independent variables, with two-way ANOVA as its simplest case. When a study involves three or more factors, the same core logic scales up to handle every factor and all of their interactions at once.

In practice, factorial designs are common in machine learning experiments and A/B testing frameworks where several conditions are being manipulated simultaneously. However, with added complexity comes a steeper interpretability challenge — the number of possible interactions grows quickly.
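
How quickly do interactions grow? A full factorial model with $k$ factors contains $2^k - k - 1$ interaction terms. The short sketch below enumerates them for hypothetical factor names:

```python
from itertools import combinations


def factorial_terms(factors: list[str]) -> dict[str, list[str]]:
    """Split the terms of a full factorial model into main effects and interactions."""
    main = list(factors)
    # Every combination of two or more factors is one interaction term.
    interactions = [
        " x ".join(combo)
        for order in range(2, len(factors) + 1)
        for combo in combinations(factors, order)
    ]
    return {"main": main, "interactions": interactions}


terms = factorial_terms(["region", "season", "channel"])  # hypothetical factors
print(len(terms["interactions"]))  # 4
print(terms["interactions"])
```

Three factors yield 4 interaction terms, four factors yield 11, and five yield 26, each of which needs to be estimated and interpreted.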

Each ANOVA type serves a distinct analytical purpose, and choosing the right one directly affects the quality of your insights. That same principle of informed variable selection carries into how ANOVA functions within broader machine learning pipelines — which is exactly where we’re headed next.

ANOVA in the Machine Learning Pipeline: Feature Selection

Choosing the right design for your ANOVA test is only half the battle. Once you understand what the data is telling you, there’s another high-value application that data scientists lean on heavily: using ANOVA as a feature selection engine before model training even begins.

Identifying What Actually Moves the Needle

In a typical machine learning dataset, not every feature deserves a seat at the table. ANOVA helps cut through the noise by quantifying which features have a statistically meaningful relationship with the target: a categorical feature whose categories show different average outcomes or, in classification, a numeric feature whose mean differs across the target classes. The F-statistic is the workhorse here. A high F-statistic signals that a feature is associated with real variance in the outcome, while a low one suggests it’s contributing little more than statistical clutter.

Feature selection powered by ANOVA separates the variables that genuinely predict outcomes from those that merely occupy memory and processing time.

Example scenario: A data team building a customer churn model might test whether geographic region, subscription tier, and device type each produce significant group differences in churn rate. ANOVA flags which variables clear the significance threshold — those are the ones worth feeding into the model.
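
The scenario above can be sketched in a few lines of plain Python. The customer records are hypothetical, and churn is coded 0/1 so that each group’s mean is its churn rate. The code computes a one-way F-statistic for each categorical feature and ranks them:

```python
from collections import defaultdict
from statistics import fmean

# Hypothetical records: (region, subscription tier, device type, churned 0/1).
customers = [
    ("north", "basic", "mobile", 1), ("south", "basic", "desktop", 1),
    ("east", "basic", "mobile", 1), ("north", "basic", "desktop", 0),
    ("south", "standard", "mobile", 1), ("east", "standard", "desktop", 0),
    ("north", "standard", "mobile", 0), ("south", "standard", "desktop", 0),
    ("east", "premium", "mobile", 0), ("north", "premium", "desktop", 0),
    ("south", "premium", "mobile", 0), ("east", "premium", "desktop", 0),
]
FEATURES = ("region", "tier", "device")


def anova_f(groups: list[list[float]]) -> float:
    """One-way F; assumes the within-group sum of squares is not zero."""
    values = [y for g in groups for y in g]
    grand = fmean(values)
    means = [fmean(g) for g in groups]
    ss_between = sum(len(g) * (m - grand) ** 2 for g, m in zip(groups, means))
    ss_within = sum((y - m) ** 2 for g, m in zip(groups, means) for y in g)
    return (ss_between / (len(groups) - 1)) / (ss_within / (len(values) - len(groups)))


def feature_scores(rows) -> dict[str, float]:
    """F-statistic of the churn outcome grouped by each categorical feature."""
    scores = {}
    for idx, name in enumerate(FEATURES):
        groups = defaultdict(list)
        for row in rows:
            groups[row[idx]].append(row[-1])  # churn outcome, grouped by category
        scores[name] = anova_f(list(groups.values()))
    return scores


ranking = sorted(feature_scores(customers).items(), key=lambda kv: kv[1], reverse=True)
for name, f in ranking:
    print(f"{name:7s} F = {f:.2f}")
```

Ranking is only the first step; a real pipeline would also compare each feature’s p-value against a threshold, and 12 records are far too few to trust any of these scores. For a binary outcome like churn, a chi-square test of independence is a common alternative. For numeric features with a categorical target, scikit-learn’s `SelectKBest(f_classif, k=...)` applies the same F-test idea.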

Reducing Dimensionality Without Losing Signal

Irrelevant features don’t just slow down training — they actively degrade model performance by introducing noise. By applying ANOVA-based filtering early in the pipeline, teams can reduce dimensionality efficiently without relying solely on computationally expensive methods like PCA or recursive feature elimination.

In practice, statistical filtering at this stage can meaningfully reduce training time and resource costs, particularly when working with large datasets containing dozens of categorical variables.

Cleaner Inputs, Sharper Predictions

Removing irrelevant features tends to produce models that generalize better, overfit less, and are far easier to interpret. ANOVA doesn’t replace domain expertise; it sharpens it, giving data scientists a statistically grounded reason to include or exclude a variable rather than relying on intuition alone.

Of course, ANOVA’s effectiveness as a feature selection tool depends on whether the underlying data meets certain structural requirements — which is exactly what the next section examines in detail.

The 4 Pillars: Essential Assumptions for Valid ANOVA Testing

Applying ANOVA in data science without checking your assumptions is like building on a cracked foundation — the structure might look solid until it collapses under pressure. Before you trust any F-statistic or p-value, four core conditions must hold.

1. Independence of Observations

This is the non-negotiable baseline. Each data point must be collected independently, meaning one measurement cannot influence another. In practice, this is achieved through careful study design — participants assigned to one group should have no effect on those in another. Violating this assumption distorts every downstream calculation.

2. Normality

ANOVA assumes that the residuals within each group follow a roughly normal distribution. Fortunately, the test is relatively robust against moderate violations, especially with larger sample sizes, because the Central Limit Theorem makes group means approximately normal even when individual observations are not. Tools like Q-Q plots or the Shapiro-Wilk test can help you verify this before proceeding.

3. Homogeneity of Variance (Homoscedasticity)

Each group’s variance should be approximately equal. When variances differ dramatically across groups, the F-statistic becomes unreliable. Levene’s test is a standard diagnostic check for this condition, and Qualtrics explains that violations can often be addressed through data transformations.
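
Levene’s test is itself a one-way ANOVA, run on each observation’s absolute deviation from its group mean. The sketch below implements that classic mean-centered version on hypothetical data. Note that `scipy.stats.levene` centers on the median by default (the Brown-Forsythe variant), so its numbers can differ from this sketch:

```python
from statistics import fmean


def one_way_f(groups: list[list[float]]) -> float:
    """Between-group mean square divided by within-group mean square."""
    values = [y for g in groups for y in g]
    grand = fmean(values)
    means = [fmean(g) for g in groups]
    ss_between = sum(len(g) * (m - grand) ** 2 for g, m in zip(groups, means))
    ss_within = sum((y - m) ** 2 for g, m in zip(groups, means) for y in g)
    return (ss_between / (len(groups) - 1)) / (ss_within / (len(values) - len(groups)))


def levene_statistic(groups: list[list[float]]) -> float:
    """Classic (mean-centered) Levene's W: one-way ANOVA on absolute deviations."""
    abs_dev = []
    for g in groups:
        m = fmean(g)
        abs_dev.append([abs(y - m) for y in g])
    return one_way_f(abs_dev)


equal_spread = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
unequal_spread = [[4, 5, 6], [0, 5, 10], [-10, 5, 20]]
print(levene_statistic(equal_spread))    # 0.0: every group has the same spread
print(levene_statistic(unequal_spread))  # larger: the spreads differ sharply
```

A large statistic, judged against an F distribution with $k - 1$ and $N - k$ degrees of freedom, flags unequal variances.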

4. Random Sampling

Without random sampling, your results can’t generalize beyond your immediate dataset. Valid generalization requires that every member of the population had a fair chance of being included — this is what separates actionable insights from anecdotal patterns.

Checking these four pillars takes minutes but saves countless hours of misinterpretation. With a valid test structure confirmed, the real payoff becomes clear — and nowhere is that more visible than across the diverse industries that rely on ANOVA daily.

Real-World Examples: ANOVA Across Diverse Industries

ANOVA isn’t a purely academic tool confined to research papers — it’s actively solving high-stakes problems across industries every day. Understanding where it applies helps clarify why it belongs in every data scientist’s toolkit.

Healthcare: Evaluating Drug Dosages

In clinical settings, researchers routinely use ANOVA to compare the effectiveness of multiple drug dosages simultaneously. Rather than running separate t-tests for each dosage pair, a single ANOVA determines whether any statistically significant difference exists across all groups at once. This keeps the overall false-positive rate at the chosen significance level and accelerates decision-making in trials where time directly affects patient outcomes.

Manufacturing: Benchmarking Supplier Materials

Quality control teams use ANOVA to compare material durability from different suppliers. Example scenario: a manufacturer sources steel components from four vendors and wants to know whether fatigue resistance varies significantly between them. ANOVA surfaces those differences quickly, informing procurement decisions before a flawed batch reaches the production line.

Marketing: Measuring Ad Performance Across Regions

Marketing analysts leverage ANOVA to evaluate how different advertisement types perform across geographic regions. By treating ad format and region as factors, teams identify which combinations drive the strongest engagement — no guessing required.

Beyond these industry applications, ANOVA-based feature selection in machine learning pipelines rests on the same statistical logic: determining which variables genuinely move the needle.

A method this versatile — spanning medicine, manufacturing, and marketing — earns its reputation as a foundational pillar of data-driven decision-making. As you build your analytical skill set, ANOVA is one technique worth mastering completely, because the insight it unlocks is consistently worth the effort.

Conclusion

ANOVA helps simplify complex data by comparing two key elements: between-group variance, which shows how much group averages differ from the overall mean, and within-group variance, which reflects how individual values vary within each group. By acting as a “signal vs. noise” detector, ANOVA highlights whether observed differences are meaningful or just random variation. A high F-ratio indicates that the differences between groups are significantly larger than the natural variation within them, suggesting real impact. In more advanced cases like two-way ANOVA, interaction effects provide deeper insights by revealing how multiple factors influence outcomes together, making ANOVA a powerful tool for data-driven decision-making.

FAQs

What is ANOVA and why is it used?

ANOVA (Analysis of Variance) is a statistical method used to compare the means of three or more groups. It helps determine whether the differences observed are statistically significant or just due to random variation. It’s widely used in research, business analytics, and data science.

Why use ANOVA instead of a t-test?

A t-test is limited to comparing the means of two groups, while ANOVA can handle three or more groups at once. Running multiple t-tests increases the risk of Type I errors (false positives), whereas a single ANOVA keeps that risk at the chosen significance level and provides a more reliable overall comparison.

What is the basic principle of ANOVA?

ANOVA works by comparing between-group variance (differences among group means) with within-group variance (variation within each group). If the between-group variance is significantly higher, it indicates that at least one group differs meaningfully from the others.

What are ANOVA types?

The main types of ANOVA include One-Way ANOVA (one independent variable), Two-Way ANOVA (two independent variables), and MANOVA (multiple dependent variables). Each type is used based on the complexity and structure of the data being analyzed.