Organizations racing to deploy AI at scale are hitting an unexpected wall: their own data. Scaling AI isn’t primarily a technology challenge anymore—it’s a governance crisis. While AI adoption has accelerated dramatically, with 92% of enterprises expanding their AI investments, a concerning gap has emerged between deployment ambitions and governance maturity.

The numbers reveal a troubling disconnect. Research shows that organizations face a widening governance gap between AI policy intentions and actual practice, creating operational bottlenecks and compliance risks. Nearly half of data leaders report their governance frameworks can’t keep pace with AI demands, while 68% struggle with data quality issues that directly undermine model performance.

The key to successful AI scaling lies in a foundation built on robust governance practices that treat data as a strategic asset rather than a technical afterthought. Organizations that establish clear data ownership, automated quality controls, and compliance frameworks before deploying AI see 3x faster time-to-value and significantly reduced risk exposure.

The path forward requires more than policies—it demands active, integrated governance processes that move at the speed of AI development.

1. Establishing Active Governance Processes

Data governance for AI requires shifting from passive documentation to active enforcement mechanisms. According to TDWI’s 2026 research, organizations implementing continuous governance monitoring see 40% fewer model failures compared to those relying on static policies.

Three operational governance patterns are proving effective:

- Pre-deployment validation gates — Automated checks verify data lineage, quality metrics, and compliance requirements before models enter production. This prevents governance issues from compounding at scale.

- Real-time monitoring dashboards — Track data drift, access patterns, and bias indicators continuously rather than through quarterly audits. Typically, organizations catch governance violations within hours instead of months.

- Cross-functional review boards — Weekly touchpoints between data engineers, legal, and business stakeholders ensure access controls remain aligned with evolving use cases. One survey found that 63% of organizations still lack regular governance reviews despite accelerating AI adoption.

The difference between companies that scale AI successfully and those that stall? Active governance processes that run automatically alongside model development, not as afterthoughts during crisis moments.
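A pre-deployment validation gate of the kind described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: the `ModelCandidate` record, its fields, the check functions, and the 5% null-rate threshold are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class ModelCandidate:
    """Hypothetical record describing a model awaiting deployment."""
    name: str
    lineage_documented: bool      # is every training source traced?
    training_null_rate: float     # fraction of missing values in training data
    uses_restricted_data: bool
    approval_on_file: bool


# A check returns a failure message, or None when the candidate passes.
Check = Callable[[ModelCandidate], Optional[str]]


def check_lineage(m: ModelCandidate) -> Optional[str]:
    return None if m.lineage_documented else "data lineage is not documented"


def check_quality(m: ModelCandidate, max_null_rate: float = 0.05) -> Optional[str]:
    # The 5% threshold is an illustrative assumption, not a recommended value.
    if m.training_null_rate > max_null_rate:
        return f"null rate {m.training_null_rate:.1%} exceeds {max_null_rate:.1%}"
    return None


def check_compliance(m: ModelCandidate) -> Optional[str]:
    if m.uses_restricted_data and not m.approval_on_file:
        return "restricted data used without an approval on file"
    return None


def run_gate(m: ModelCandidate, checks: List[Check]) -> List[str]:
    """Run every check and collect failures; an empty list means clear to deploy."""
    failures = []
    for check in checks:
        message = check(m)
        if message is not None:
            failures.append(message)
    return failures


GATE = [check_lineage, check_quality, check_compliance]
candidate = ModelCandidate("churn-v2", True, 0.12, True, False)
failures = run_gate(candidate, GATE)
print(failures or "clear to deploy")
```

Collecting every failure instead of stopping at the first one gives the team a complete list to fix in a single pass, which matters when the gate runs automatically in a deployment pipeline.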

Embedding Governance into Workflows

The gap between AI governance policy and practice remains stark: Thomson Reuters reports that 62% of organizations have governance frameworks in place, yet 44% fail to implement consistent controls. The solution isn’t creating better policies—it’s embedding governance directly into development workflows where decisions happen.

Shift-left governance means integrating compliance checks at the earliest stages of AI development. Rather than auditing models post-deployment, teams implement automated validation gates that check data lineage, bias metrics, and access permissions during feature engineering. Data governance trends indicate this approach reduces remediation costs by 70% compared to retroactive fixes.

Modern platforms enable this through API-driven governance layers. When data scientists query training datasets, role-based access control automatically applies sensitivity filters and logs usage for audit trails. Approval workflows trigger automatically when models access restricted data categories, creating friction only where risk demands it.
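As a rough sketch of such a governance layer, the following Python wraps a dataset read so that sensitive columns are filtered by role and every access is logged. It assumes an in-memory list of rows and an in-memory audit log; `governed_query`, `SENSITIVE_COLUMNS`, and `ROLES_CLEARED_FOR_SENSITIVE` are hypothetical names, and a real platform would back them with a catalog and a durable log store.

```python
from datetime import datetime, timezone
from typing import Dict, List

# Assumed for illustration: which columns are sensitive and which roles may see them.
SENSITIVE_COLUMNS = {"email", "ssn"}
ROLES_CLEARED_FOR_SENSITIVE = {"privacy_officer"}

audit_log: List[Dict[str, object]] = []


def governed_query(user: str, role: str, dataset: str,
                   rows: List[Dict[str, object]]) -> List[Dict[str, object]]:
    """Return rows with sensitive columns removed unless the role is cleared,
    and record the access in the audit log."""
    cleared = role in ROLES_CLEARED_FOR_SENSITIVE
    visible = [
        {col: val for col, val in row.items()
         if cleared or col not in SENSITIVE_COLUMNS}
        for row in rows
    ]
    audit_log.append({
        "user": user,
        "role": role,
        "dataset": dataset,
        "rows_returned": len(visible),
        "sensitive_columns_included": cleared,
        "at": datetime.now(timezone.utc).isoformat(),
    })
    return visible


customers = [
    {"id": 1, "region": "EU", "email": "a@example.com", "ssn": "000-00-0000"},
    {"id": 2, "region": "US", "email": "b@example.com", "ssn": "000-00-0001"},
]
training_rows = governed_query("dana", "data_scientist", "customers", customers)
print(training_rows)
```

Because the filter and the log entry live in the same function, a caller cannot read the data without leaving an audit record.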

The practical outcome: governance becomes invisible infrastructure rather than compliance theater. Development velocity increases because ambiguity disappears, and teams receive immediate feedback on compliance status rather than discovering issues during pre-production reviews.

2. Improving Data Quality for AI Readiness

Data quality directly determines AI model performance, yet Informatica’s 2026 research reveals only 38% of organizations have established data quality standards for AI. Poor data quality compounds exponentially in machine learning systems—a single corrupted field can cascade through millions of predictions.

Best practices in 2026 focus on proactive quality monitoring rather than reactive fixes. According to Alation’s governance framework, organizations should implement automated data quality checks at ingestion points, continuously measuring completeness, accuracy, consistency, and timeliness. This prevents contaminated data from entering AI training pipelines.

Three critical quality dimensions for AI readiness:

- Completeness: Missing values create model bias; establish thresholds for acceptable null rates per dataset

- Consistency: Standardize formats across systems through robust ETL processes before AI ingestion

- Lineage tracking: Document data transformations to understand how quality issues propagate through AI workflows
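The completeness and consistency checks above can be expressed as small functions that run at ingestion. The sketch below is an assumption-laden example: the column name `signup`, the ISO date convention, and the sample rows are invented for illustration, and acceptable thresholds would be set per dataset.

```python
import re
from typing import Dict, List, Optional

Row = Dict[str, Optional[str]]


def null_rate(rows: List[Row], column: str) -> float:
    """Completeness: fraction of rows where the column is missing or empty."""
    if not rows:
        return 0.0
    missing = sum(1 for row in rows if row.get(column) in (None, ""))
    return missing / len(rows)


# Consistency: this example assumes dates should be ISO 8601 (YYYY-MM-DD).
ISO_DATE = re.compile(r"^\d{4}-\d{2}-\d{2}$")


def inconsistent_dates(rows: List[Row], column: str) -> List[str]:
    """Return the non-empty values that do not match the expected date format."""
    return [
        row[column]
        for row in rows
        if row.get(column) and not ISO_DATE.match(row[column])
    ]


rows = [
    {"id": "1", "signup": "2026-01-05"},
    {"id": "2", "signup": "05/01/2026"},   # wrong format
    {"id": "3", "signup": None},           # missing
    {"id": "4", "signup": "2026-02-11"},
]
print(null_rate(rows, "signup"))           # 0.25
print(inconsistent_dates(rows, "signup"))  # ['05/01/2026']
```

A pipeline could compare `null_rate` against the per-dataset threshold and reject or quarantine the batch when it is exceeded.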

RadarFirst’s analysis shows organizations with automated quality gates reduce AI retraining cycles by 42%, directly impacting operational efficiency and model trustworthiness.

AI-Ready BI Data: Definition and Importance

AI-ready business intelligence (BI) data is BI data that meets the quality, accessibility, and governance standards needed for effective AI model training and deployment. Unlike traditional BI data optimized primarily for human consumption through dashboards and reports, AI-ready data must be machine-parsable, consistently formatted, and comprehensively documented with metadata that explains context and lineage.

The distinction matters because AI data governance requires fundamentally different controls than conventional BI workflows. While standard BI tolerates some inconsistency in data formatting or occasional gaps in historical records, AI models trained on such data produce unreliable outputs that can compound errors at scale. P3 Adaptive’s 2026 governance guide emphasizes that AI-ready data must include explicit bias documentation, clear provenance tracking, and version control—elements often absent from legacy BI systems.
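One way to make provenance, bias documentation, and versioning concrete is to attach a small manifest to each dataset snapshot. The following is only a sketch: the `DatasetManifest` fields and the `fingerprint` helper are hypothetical, and the content hash assumes the rows are JSON-serializable.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Dict, List, Tuple


def fingerprint(rows: List[Dict[str, object]]) -> str:
    """Content hash of the rows; any change to the data changes the digest."""
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class DatasetManifest:
    """Hypothetical metadata record stored alongside a dataset snapshot."""
    name: str
    version: str
    source_system: str                 # provenance: where the data came from
    transformations: Tuple[str, ...]   # lineage: steps applied, in order
    known_bias_notes: str              # explicit bias documentation
    content_sha256: str                # ties the manifest to exact contents


rows = [{"customer_id": 1, "region": "EU"}, {"customer_id": 2, "region": "US"}]
manifest = DatasetManifest(
    name="customers",
    version="2026.01",
    source_system="crm_export",
    transformations=("deduplicate", "anonymize_email"),
    known_bias_notes="EU customers are over-represented in this export.",
    content_sha256=fingerprint(rows),
)
print(manifest.content_sha256[:12])
```

Recomputing `fingerprint` before training and comparing it with the manifest is a cheap check that the data has not changed since it was documented.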

Organizations that treat BI and AI data preparation as interchangeable workflows face significant roadblocks. Typically, data teams discover AI readiness gaps only after model training begins, requiring expensive rework of governance processes and data pipelines simultaneously.

3. Leveraging Role-Based Access Control (RBAC)

Role-based access control forms the backbone of data governance best practices by ensuring team members access only the data necessary for their specific functions. In AI environments, this becomes particularly critical: TDWI’s 2026 predictions emphasize that uncontrolled data access represents one of the primary risks to model integrity and regulatory compliance.

RBAC implementation starts with defining granular permission levels across three dimensions: data classification (public, internal, confidential, restricted), user roles (analyst, data scientist, engineer, business user), and operational contexts (read, write, modify, delete). A common pattern is establishing hierarchical access tiers where data scientists receive broader dataset access for model training, while business analysts operate within pre-approved, anonymized subsets.

However, static role assignments often fail in dynamic AI workflows. In practice, organizations typically supplement RBAC with attribute-based controls that adjust permissions based on data sensitivity, project requirements, and compliance needs, creating flexible yet secure access patterns that scale with AI adoption. This layered approach maintains the governance rigor necessary for trustworthy AI while supporting the exploratory nature of data science work.
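The layered model can be sketched as a role policy checked first, followed by an attribute rule that narrows access further. Everything in this example is an assumption made for illustration: the ceiling assigned to each role, the permitted operations, and the rule that confidential data requires membership in the owning project.

```python
from enum import IntEnum
from typing import Dict, Set, Tuple


class Classification(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Role -> (highest classification allowed, permitted operations).
# These assignments are illustrative, not a recommended policy.
ROLE_POLICY: Dict[str, Tuple[Classification, Set[str]]] = {
    "business_user": (Classification.INTERNAL, {"read"}),
    "analyst": (Classification.INTERNAL, {"read"}),
    "data_scientist": (Classification.CONFIDENTIAL, {"read", "write"}),
    "engineer": (Classification.CONFIDENTIAL, {"read", "write", "modify", "delete"}),
}


def rbac_allows(role: str, classification: Classification, operation: str) -> bool:
    """Static role check: unknown roles are denied by default."""
    policy = ROLE_POLICY.get(role)
    if policy is None:
        return False
    max_level, operations = policy
    return classification <= max_level and operation in operations


def access_allowed(role: str, classification: Classification, operation: str,
                   *, user_projects: Set[str], dataset_project: str) -> bool:
    """RBAC first, then an attribute rule: confidential-or-higher data is only
    available to users assigned to the project that owns the dataset."""
    if not rbac_allows(role, classification, operation):
        return False
    if classification >= Classification.CONFIDENTIAL:
        return dataset_project in user_projects
    return True


print(access_allowed("data_scientist", Classification.CONFIDENTIAL, "read",
                     user_projects={"churn"}, dataset_project="churn"))   # True
print(access_allowed("data_scientist", Classification.CONFIDENTIAL, "read",
                     user_projects={"pricing"}, dataset_project="churn"))  # False
```

Here the attribute layer only tightens what the role already permits, so removing it can never grant more access than the role policy alone.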

4. Crafting Comprehensive AI Governance Frameworks

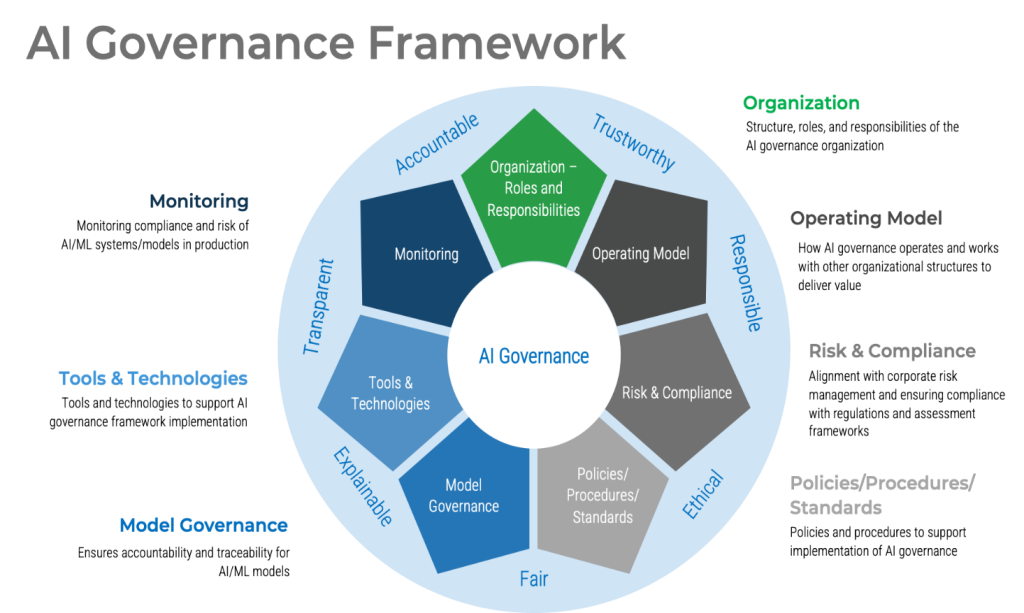

An AI governance framework serves as the architectural blueprint for managing artificial intelligence systems throughout their lifecycle—from development through deployment and ongoing monitoring. Recent research reveals that 80% of organizations face significant gaps between policy intentions and actual governance implementation, underscoring the critical need for structured frameworks.

Effective frameworks integrate several foundational components:

Essential Framework Components

Risk Assessment Protocols establish systematic approaches for identifying and evaluating AI-related risks across technical, ethical, and operational dimensions. Organizations must define risk tolerance levels specific to each AI use case, considering potential impacts on stakeholders and regulatory exposure.

Ethical Guidelines and Principles translate organizational values into actionable standards. These typically address fairness, transparency, accountability, and privacy—ensuring AI systems align with both regulatory requirements and societal expectations.

Continuous Monitoring Mechanisms enable real-time oversight of AI system performance and behavior. Industry analysis shows that organizations with established governance protocols detect compliance drift 60% faster than those relying on periodic audits alone.
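To illustrate what a continuous monitoring check might compute, the sketch below uses the Population Stability Index (PSI), a common drift statistic chosen here as an example rather than something the sources above prescribe. The bin count, the `eps` floor for empty bins, and the 0.25 alert threshold (a widely used rule of thumb) are assumptions, and both inputs are assumed to be non-empty.

```python
import math
from typing import List, Sequence


def psi(expected: Sequence[float], actual: Sequence[float],
        bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between a baseline and a current sample.
    Bins are equal-width over the baseline's range; out-of-range values
    fall into the first or last bin."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor at eps so empty bins do not produce log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]        # training-time feature values
current = [0.5 + i / 200 for i in range(100)]   # production values, shifted upward

score = psi(baseline, current)
if score > 0.25:  # rule-of-thumb threshold, assumed for this example
    print(f"drift alert: PSI={score:.2f}")
```

Running a check like this on a schedule for each monitored feature turns a quarterly audit question into an alert that fires when the input distribution moves.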

The framework must also define clear ownership structures, designating accountability for AI outcomes at both technical and executive levels.

ISO 42001 and the EU AI Act: Implications for Governance

ISO 42001 represents the world’s first international standard specifically designed for AI management systems, establishing baseline requirements for Responsible AI deployment at scale. Organizations pursuing certification must demonstrate documented processes for risk assessment, continuous monitoring, and impact evaluation across their AI portfolio. The standard mandates explicit accountability structures, requiring executive-level oversight and clear escalation pathways when AI systems deviate from expected performance thresholds.

The EU AI Act, which entered into force in August 2024 and applies in phases, with most remaining obligations scheduled to apply from August 2026, introduces tiered risk classifications that directly influence governance requirements. High-risk AI applications, such as those affecting employment, credit scoring, or law enforcement, face stringent obligations including mandatory conformity assessments and continuous documentation. Organizations deploying prohibited AI systems, such as social scoring mechanisms, face fines of up to €35 million or 7% of global annual turnover, whichever is higher.

Together, these frameworks compel enterprises to formalize governance structures previously treated as optional. Companies often discover that their existing documentation falls short of regulatory standards, which triggers comprehensive audits of data lineage, model versioning, and decision audit trails before compliance deadlines turn into enforceable penalties.

5. Case Study: AI Governance Transformations in Action

Financial services organizations provide compelling evidence of governance transformation under pressure. A common pattern is for institutions to discover their AI governance gaps during routine compliance audits—typically when regulators question model documentation or data lineage tracking. One practical approach involves establishing centralized AI review boards that evaluate every model before production deployment, ensuring accountability structures exist before problems emerge.

Healthcare systems demonstrate another transformation pathway. Typically, organizations begin with scattered AI initiatives across departments, each using different data sources without consistent governance. The transformation point arrives when leadership mandates enterprise-wide governance frameworks that unify metadata management, establish cross-functional oversight committees, and implement automated compliance monitoring. These transformations consistently reduce model deployment times by 40-60% while simultaneously improving audit readiness.

Manufacturing environments show that governance evolution often starts with quality control failures—AI models producing inconsistent predictions because training data wasn’t properly versioned or validated. Successful transformations typically involve implementing comprehensive data architecture alongside governance policies, creating clear ownership hierarchies, and establishing validation checkpoints that prevent corrupted data from entering AI pipelines. The governance investment pays dividends through measurably improved model performance and regulatory confidence.

AI Scaling Challenges vs Governance Solutions

| Challenge Area | Key Issue | Impact on AI Scaling | Governance Solution |
| --- | --- | --- | --- |
| Data Quality | Inconsistent, incomplete datasets | Poor model accuracy & bias | Automated data quality checks & validation gates |
| Governance Gap | Policies exist but not enforced | Compliance risks & bottlenecks | Active governance processes with real-time monitoring |
| Data Ownership | Lack of clear accountability | Confusion & slow decision-making | Defined data ownership & stewardship models |
| Access Control | Unrestricted data access | Security & compliance risks | Role-Based Access Control (RBAC) |
| Legacy Systems | Poor metadata & lineage tracking | Limited AI scalability | Modern data architecture with lineage tracking |

Limitations and Considerations

While the governance frameworks and strategies outlined offer transformative potential, organizations must recognize inherent constraints before implementation. Resource requirements represent the most immediate barrier—CDO research reveals that 62% of organizations cite insufficient budget as their primary governance obstacle.

Technology limitations further complicate scaling efforts. Legacy systems often lack the metadata management capabilities required for modern AI governance, forcing organizations into costly platform migrations. A common pattern is for enterprises to underestimate the technical debt accumulated in existing data architectures, discovering only mid-implementation that fundamental infrastructure changes are necessary.

Cultural resistance frequently derails even well-designed governance programs. When governance is perceived as bureaucratic overhead rather than a business enabler, adoption stalls. Organizations typically need 18-24 months to shift from compliance-focused governance to value-driven governance that stakeholders embrace.

Regulatory uncertainty adds complexity, particularly as frameworks like the EU AI Act introduce requirements that may conflict with existing regional regulations. Organizations operating globally face the challenge of harmonizing governance approaches across jurisdictions with divergent requirements—a balancing act that demands continuous policy adaptation as regulations evolve through 2026 and beyond.

Key Takeaways

Scaling AI with data governance best practices requires balancing innovation velocity with risk management, a tension that defines enterprise AI strategies in 2026. Organizations that treat governance as an enabler rather than a constraint achieve measurable competitive advantages, with early adopters reporting 30% faster AI deployment cycles when foundational frameworks are established.

The evidence is clear: federated governance models democratize data access while maintaining controls, automated lineage tracking helps teams trace data issues before they degrade models in production, and cross-functional collaboration dissolves traditional organizational silos. Organizations that invest in metadata management infrastructure typically see returns grow as AI initiatives multiply, because each new project reuses the same catalog, lineage, and quality metadata.

The question isn’t whether to implement governance frameworks; market leaders already have. The critical decision is how quickly your organization can evolve from reactive compliance to proactive governance architecture. Start with one high-impact use case, measure outcomes rigorously, and iterate based on evidence rather than assumptions. The window for competitive differentiation through governance excellence narrows each quarter as best practices standardize across industries.

FAQs

What does scaling AI with data governance mean?

It means expanding AI initiatives while ensuring data quality, security, compliance, and consistent governance across all systems.

Why is data governance important for scaling AI?

It ensures reliable, accurate, and compliant data, which is essential for building trustworthy and scalable AI models.

What challenges arise when scaling AI without governance?

Organizations may face poor data quality, compliance risks, biased models, and inconsistent results.

How does governance improve AI model performance?

By enforcing data standards and monitoring, governance ensures high-quality data that leads to better model accuracy and reliability.

What role does data quality play in scaling AI?

High data quality is critical, as poor data leads to inaccurate predictions and reduced AI effectiveness.