Artificial Intelligence has transformed how machines understand and generate human language. From chatbots to search engines, modern systems rely on complex models to process text data efficiently.

One of the most important metrics used to evaluate these models is perplexity.

Understanding perplexity helps data scientists and machine learning engineers assess how well a language model predicts text and decide where to improve it.

What is Perplexity?

Perplexity is a measurement used in natural language processing to evaluate how well a probability model predicts a sample.

In simple terms:

Perplexity measures how “confused” a model is when predicting the next word.

Key idea:

- Lower perplexity = better model

- Higher perplexity = poor predictions

Why Perplexity Matters in AI

Perplexity plays a critical role in evaluating language models.

Importance:

- Measures prediction accuracy indirectly

- Helps compare different models

- Guides model optimization

Applications:

- Chatbots

- Text generation

- Machine translation

- Speech recognition

Mathematical Understanding of Perplexity

Perplexity is calculated using probability distributions.

Formula:

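Perplexity = 2^(-(1/N) * sum of log2 probabilities)

Or more commonly:

Perplexity = exp(-(1/N) * sum of natural-log probabilities)

Both forms give the same value as long as the base of the logarithm matches the base of the exponent.

Explanation:

- N = number of tokens (words, in a word-level model) in the evaluated text

- Log probability = logarithm of the probability the model assigned to each actual token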
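The exponential form of the formula can be sketched in a few lines of Python. This is a minimal illustration, not a library implementation: the function name `perplexity` and the probability lists are made up for the example, and each list holds the probability a hypothetical model assigned to the token that actually occurred.

```python
import math

def perplexity(token_probs):
    """Perplexity from the probabilities a model gave to each actual token."""
    n = len(token_probs)
    # Average negative natural-log probability per token.
    avg_neg_log_prob = -sum(math.log(p) for p in token_probs) / n
    return math.exp(avg_neg_log_prob)

# Hypothetical probabilities for a four-token sentence.
confident = [0.5, 0.5, 0.5, 0.5]
uncertain = [0.1, 0.1, 0.1, 0.1]

print(perplexity(confident))  # approximately 2
print(perplexity(uncertain))  # approximately 10
```

A model that gives every correct token a probability of 0.5 behaves as if it were choosing between 2 equally likely options at each step, while a probability of 0.1 corresponds to 10 options.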

Perplexity in Language Models

Language models use perplexity to evaluate performance.

Example:

If a model predicts the next word in a sentence:

“The cat is ___”

- Good prediction: “sleeping”

- Poor prediction: random word

Lower perplexity indicates better prediction capability.

Real-Time Examples of Perplexity

Example 1: Chatbots

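A chatbot whose language model has low perplexity on real conversations tends to generate more fluent, relevant responses.

Example 2: Text Autocomplete

Autocomplete systems rank candidate completions with a language model, and a model with lower perplexity on real queries tends to suggest more relevant completions.

Example 3: Machine Translation

Lower perplexity on target-language text generally goes along with more fluent translations, although it does not guarantee that the meaning is preserved.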

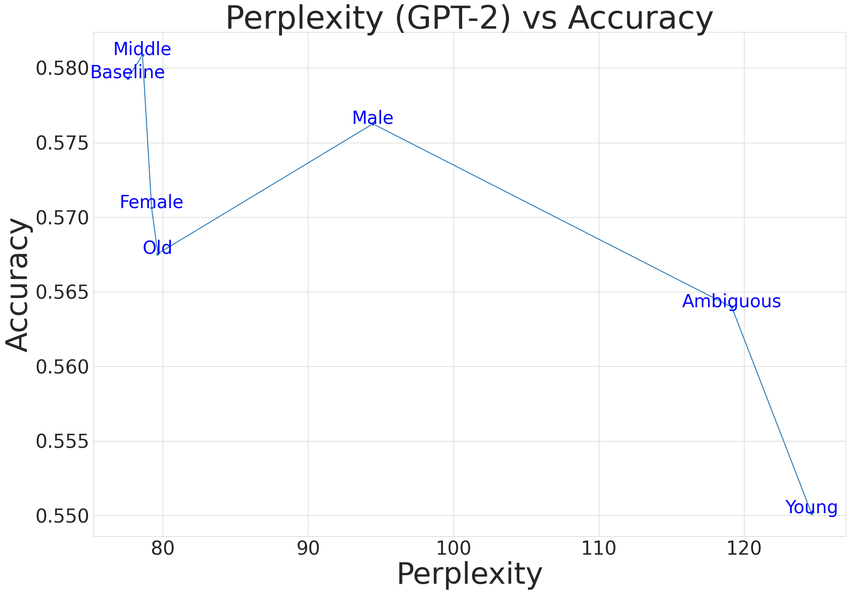

Perplexity vs Accuracy

Perplexity:

- Measures probability

- Focuses on uncertainty

Accuracy:

- Measures correctness

- Binary evaluation

Key Difference:

Perplexity reflects how much probability the model assigned to the correct answer, not just whether its top guess was correct.
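The difference can be made concrete with a small sketch. The two "models" below are hypothetical hand-written probability tables, and the helper names `accuracy` and `perplexity` are chosen for this example only. Both models pick the right word every time, yet they differ in how much probability they place on it.

```python
import math

def accuracy(predictions, target):
    # Fraction of steps where the highest-probability word equals the target.
    hits = sum(max(dist, key=dist.get) == t for dist, t in zip(predictions, target))
    return hits / len(target)

def perplexity(predictions, target):
    # exp of the average negative log-probability given to the true words.
    nll = -sum(math.log(dist[t]) for dist, t in zip(predictions, target))
    return math.exp(nll / len(target))

target = ["cat", "sat"]
# Hypothetical next-word distributions from two models.
model_a = [{"cat": 0.9, "dog": 0.1}, {"sat": 0.9, "ran": 0.1}]
model_b = [{"cat": 0.6, "dog": 0.4}, {"sat": 0.6, "ran": 0.4}]

print(accuracy(model_a, target), accuracy(model_b, target))      # both 1.0
print(perplexity(model_a, target), perplexity(model_b, target))  # about 1.11 and 1.67
```

Accuracy cannot separate the two models, while perplexity rewards the one that was more certain about the correct words.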

Perplexity in NLP Applications

Applications include:

- Language modeling

- Text summarization

- Speech recognition

- Predictive typing

Factors Affecting Perplexity

Key factors:

- Data quality

- Model architecture

- Training size

- Vocabulary complexity

Cross-Entropy and Its Relationship with Perplexity

Perplexity is directly derived from cross-entropy, a key concept in machine learning.

What is Cross-Entropy?

Cross-entropy measures the difference between the predicted probability distribution and the actual distribution.

Relationship:

- Lower cross-entropy → Lower perplexity

- Higher cross-entropy → Higher perplexity

Formula Connection:

Perplexity = exp(cross-entropy)

Why This Matters:

Understanding cross-entropy helps interpret perplexity more effectively in model evaluation.
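Written out, the connection looks as follows. The symbols are notation assumed for this sketch: N is the number of tokens, w_i is the i-th token, and p(w_i | w_<i) is the probability the model assigns to it given the preceding tokens. Cross-entropy H is measured in nats (natural logarithm).

```latex
\begin{aligned}
H &= -\frac{1}{N}\sum_{i=1}^{N} \ln p(w_i \mid w_{<i}) \\
\mathrm{PPL} &= \exp(H) = \left(\prod_{i=1}^{N} p(w_i \mid w_{<i})\right)^{-1/N}
\end{aligned}
```

For instance, a cross-entropy of 2.5 nats per token corresponds to a perplexity of exp(2.5), roughly 12.18.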

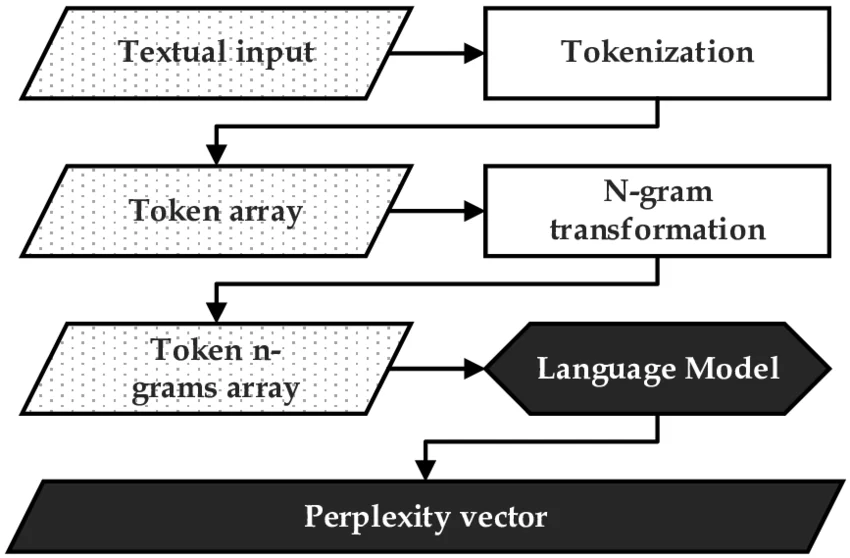

Perplexity in N-Gram Models

Before modern deep learning models, n-gram models were widely used.

How It Works:

- Predict next word based on previous words

- Example: bigram (2 words), trigram (3 words)

Role of Perplexity:

- Measures how well n-gram models predict sequences

- Helps compare different n-gram configurations
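As a rough illustration of how this works for a bigram model, the sketch below trains on a toy corpus and scores two short sequences. The function names and the corpus are invented for the example, add-one (Laplace) smoothing is one simple choice among several, and the code assumes every test word already appears in the training vocabulary.

```python
import math
from collections import Counter

def train_bigram(tokens):
    contexts = Counter(tokens[:-1])             # how often each word is a context
    bigrams = Counter(zip(tokens, tokens[1:]))  # how often each word pair occurs
    vocab = set(tokens)
    return contexts, bigrams, vocab

def bigram_perplexity(model, tokens):
    contexts, bigrams, vocab = model
    pairs = list(zip(tokens, tokens[1:]))
    log_prob = 0.0
    for prev, word in pairs:
        # Add-one smoothing keeps unseen pairs from getting zero probability.
        p = (bigrams[(prev, word)] + 1) / (contexts[prev] + len(vocab))
        log_prob += math.log(p)
    return math.exp(-log_prob / len(pairs))

model = train_bigram("the cat sat on the mat the cat ate".split())
seen = bigram_perplexity(model, "the cat sat".split())
unseen = bigram_perplexity(model, "mat sat the".split())

print(seen, unseen)  # the familiar word order scores lower
```

The sequence that follows word orders seen in training gets a lower perplexity than the one made of unseen pairs, which is the property used to compare n-gram configurations.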

Perplexity in Transformer Models

Modern AI models like transformers rely heavily on perplexity.

Key Features:

- Context-aware predictions

- Attention mechanisms

Why Perplexity is Important:

- Evaluates large language models

- Tracks training progress

Perplexity During Training vs Evaluation

Perplexity behaves differently during model development.

Training Phase:

- Gradually decreases

- Indicates learning progress

Validation Phase:

- Helps detect overfitting

Key Insight:

If training perplexity decreases but validation perplexity increases, the model is overfitting.
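One simple way to turn this rule into a check is sketched below. The function name, the `patience` parameter, and the perplexity values are all illustrative assumptions; real training loops usually apply a similar idea through early stopping on validation loss.

```python
def first_overfitting_epoch(train_ppl, val_ppl, patience=2):
    """Return the first epoch of a run of `patience` epochs in which training
    perplexity falls while validation perplexity rises, or None if no such run."""
    streak = 0
    for epoch in range(1, len(train_ppl)):
        train_falling = train_ppl[epoch] < train_ppl[epoch - 1]
        val_rising = val_ppl[epoch] > val_ppl[epoch - 1]
        streak = streak + 1 if (train_falling and val_rising) else 0
        if streak >= patience:
            return epoch - patience + 1
    return None

# Hypothetical per-epoch perplexities (epoch 0 first).
train_ppl = [80.0, 55.0, 40.0, 31.0, 25.0, 21.0]
val_ppl = [85.0, 62.0, 50.0, 48.0, 51.0, 56.0]

print(first_overfitting_epoch(train_ppl, val_ppl))  # 4
```

Requiring several consecutive diverging epochs avoids reacting to a single noisy validation measurement.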

Perplexity and Tokenization

Tokenization affects perplexity significantly.

Types:

- Word-level tokens

- Subword tokens

- Character-level tokens

Impact:

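- Smaller tokens (characters, subwords) → Lower per-token perplexity, because each prediction chooses among fewer options

- Larger tokens (whole words) → Higher per-token perplexity

Because of this, perplexity values are only directly comparable between models that use the same tokenization.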
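Because token size changes the scale of perplexity, a common workaround is to convert it to a tokenizer-independent unit such as bits per character. The sketch below assumes two hypothetical models evaluated on the same 1,000-character text; all numbers and the function name are invented for illustration.

```python
import math

def bits_per_character(token_perplexity, num_tokens, num_chars):
    # Total negative log-likelihood in bits, spread over the characters.
    total_bits = num_tokens * math.log2(token_perplexity)
    return total_bits / num_chars

# Same text, two hypothetical tokenizations.
word_bpc = bits_per_character(token_perplexity=60.0, num_tokens=200, num_chars=1000)
subword_bpc = bits_per_character(token_perplexity=20.0, num_tokens=300, num_chars=1000)

print(word_bpc, subword_bpc)  # about 1.18 and 1.30
```

In this made-up case the subword model has the lower per-token perplexity (20 versus 60) but the higher cost per character, so comparing raw perplexities would point to the wrong model.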

Perplexity in Multilingual Models

Perplexity varies across languages.

Challenges:

- Different grammar structures

- Vocabulary size

- Data availability

Example:

A model trained on English may show higher perplexity on less-resourced languages.

Benchmarking Models Using Perplexity

Perplexity is widely used in benchmarking.

Example:

Comparing two models:

- Model A: Perplexity = 20

- Model B: Perplexity = 15

Model B performs better, provided both models were evaluated on the same test set with the same tokenization.

Perplexity in Generative AI Systems

Generative AI models depend on perplexity for optimization.

Applications:

- Text generation

- Code generation

- Content creation

Insight:

Lower perplexity improves coherence and fluency.

Perplexity vs BLEU Score

BLEU is another evaluation metric in NLP, used mainly for machine translation.

Perplexity:

- Measures probability

- Model-based

BLEU Score:

- Measures similarity to reference text

- Output-based

Use Together:

Combining both gives better evaluation.

Perplexity in Speech Recognition

Speech systems convert audio into text.

Role:

- Evaluates language model predictions

- Improves transcription accuracy

Impact of Dataset Size on Perplexity

Observation:

- Larger datasets → Lower perplexity

- Smaller datasets → Higher perplexity

Reason:

More data improves learning and prediction accuracy.

Perplexity and Vocabulary Size Trade-Off

Trade-Off:

- Large vocabulary → Higher complexity

- Small vocabulary → Simpler model

Balance:

Choose optimal vocabulary size to minimize perplexity.

Fine-Tuning Models to Reduce Perplexity

Fine-tuning improves model performance.

Steps:

- Use domain-specific data

- Adjust hyperparameters

- Train for additional epochs

Perplexity Monitoring in Production Systems

In real-world systems, monitoring is essential.

Why Monitor:

- Detect model degradation

- Maintain performance

Tools:

- Logging systems

- Monitoring dashboards

Perplexity in Conversational AI

Chatbots and assistants rely on perplexity.

Role:

- Ensures meaningful responses

- Improves conversation quality

Perplexity and Context Length

Context length affects predictions.

Insight:

- Longer context → Better predictions

- Short context → Higher perplexity

Perplexity in Code Generation Models

AI models generate code using NLP techniques.

Example:

- Auto-completion tools

- Coding assistants

Lower perplexity leads to more accurate code suggestions.

Hyperparameter Tuning for Perplexity Optimization

Key parameters:

- Learning rate

- Batch size

- Model depth

Proper tuning reduces perplexity.

Perplexity in Real-Time Applications

Examples:

- Chatbots

- Recommendation systems

- Virtual assistants

Real-time systems require low perplexity for better performance.

Combining Perplexity with Other Metrics

For better evaluation:

- Accuracy

- Precision

- Recall

- F1 Score

Perplexity Visualization Techniques

Visualizing helps understanding.

Methods:

- Training curves

- Graphs

- Dashboards

Common Misinterpretations of Perplexity

Mistakes:

- Assuming lower perplexity always means better output

- Ignoring context and semantics

Practical Implementation Example

Python Example:

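    import math

    # Average cross-entropy loss per token, in nats (natural log), as reported
    # by most training loops; 2.5 is an illustrative value.
    loss = 2.5

    # Perplexity is the exponential of the per-token cross-entropy.
    perplexity = math.exp(loss)

    print(perplexity)  # about 12.18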

Industry Use Cases of Perplexity

Industries:

- Technology

- Healthcare

- Finance

- E-commerce

Perplexity in AI Research

Researchers use perplexity for:

- Model comparison

- Algorithm evaluation

- Performance benchmarking

Advanced Optimization Techniques

Techniques:

- Transfer learning

- Regularization

- Dropout

Ethical Considerations in Model Evaluation

Evaluation metrics impact decisions.

Considerations:

- Bias detection

- Fair evaluation

- Transparency

Scaling Models and Perplexity

Larger models often achieve lower perplexity.

Trade-Off:

- Better performance

- Higher computational cost

Perplexity and Information Theory Foundations

Perplexity is deeply rooted in information theory.

Core Concept:

Perplexity measures the uncertainty of a probability distribution.

It is directly linked to:

- Entropy

- Cross-entropy

- Information gain

Interpretation:

- Lower perplexity = less uncertainty

- Higher perplexity = more randomness

Insight:

Perplexity can be seen as the effective branching factor of a model when predicting the next token.
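The branching-factor reading is easy to check numerically for a single next-token distribution. In the sketch below, `distribution_perplexity` is a name chosen for this example, and both distributions are made up.

```python
import math

def distribution_perplexity(probs):
    # exp of the Shannon entropy (in nats) of one next-token distribution.
    entropy = -sum(p * math.log(p) for p in probs if p > 0)
    return math.exp(entropy)

uniform_over_8 = [1 / 8] * 8      # eight equally likely next tokens
peaked = [0.93] + [0.01] * 7      # one dominant token, seven unlikely ones

print(distribution_perplexity(uniform_over_8))  # approximately 8
print(distribution_perplexity(peaked))          # between 1 and 2
```

A uniform choice among 8 tokens gives a perplexity of 8, while the peaked distribution behaves like a choice among fewer than 2 options even though 8 tokens are technically possible.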

Scaling Laws and Perplexity in Large Language Models

Modern AI research shows that perplexity follows predictable scaling laws.

Key Insight:

As model size, data, and compute increase:

- Perplexity decreases systematically

- Performance improves

Scaling Law Equation:

Loss ∝ (Model Size)^(-α), where α is a small positive exponent fitted from experiments

Practical Meaning:

- Bigger models + more data = better predictions

- But with diminishing returns
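To show what fitting such a relationship involves, the sketch below recovers the exponent from synthetic data by linear regression in log-log space. The data points are generated from an assumed power law with α = 0.1 and are not measurements; published scaling laws also typically include an irreducible-loss term that this simplified form leaves out.

```python
import math

def fit_power_law_exponent(sizes, losses):
    # Least-squares slope of log(loss) against log(size); alpha is its negative.
    xs = [math.log(s) for s in sizes]
    ys = [math.log(l) for l in losses]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    return -covariance / variance

# Synthetic (not measured) losses following loss = 10 * size^(-0.1).
sizes = [1e6, 1e7, 1e8, 1e9]
losses = [10 * s ** -0.1 for s in sizes]

alpha = fit_power_law_exponent(sizes, losses)
print(alpha)                                      # close to 0.1
print([round(math.exp(l), 1) for l in losses])    # perplexity shrinks more slowly at each step
```

Each tenfold increase in size lowers the loss by the same factor, so the absolute gain gets smaller every time, which is the diminishing-returns pattern.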

Perplexity and Zero-Shot / Few-Shot Learning

Large models perform tasks without explicit training.

Observation:

- Lower perplexity often correlates with better zero-shot performance

Example:

A model with lower perplexity can:

- Answer questions

- Translate languages

- Summarize text

Without task-specific training.

Calibration of Language Models Using Perplexity

Perplexity helps evaluate model calibration.

Calibration Meaning:

How well predicted probabilities match actual outcomes.

Issue:

Models may have:

- Low perplexity

- But poor calibration

Solution:

- Temperature scaling

- Probability normalization
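Temperature scaling can be sketched as dividing the logits by a constant before the softmax. In the example below the logits, targets, and helper names are hypothetical; the model is deliberately overconfident, being right twice and confidently wrong once. In practice the temperature would be fitted on held-out data rather than picked by hand.

```python
import math

def softmax(logits, temperature=1.0):
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def perplexity(logit_rows, targets, temperature=1.0):
    nll = 0.0
    for logits, target in zip(logit_rows, targets):
        nll -= math.log(softmax(logits, temperature)[target])
    return math.exp(nll / len(targets))

# Hypothetical overconfident predictions over a three-token vocabulary.
logit_rows = [[6.0, 0.0, 0.0], [6.0, 0.0, 0.0], [6.0, 0.0, 0.0]]
targets = [0, 0, 1]  # the third prediction is wrong

print(perplexity(logit_rows, targets, temperature=1.0))
print(perplexity(logit_rows, targets, temperature=3.0))  # lower: less overconfident
```

Softening the distribution costs a little on the correct predictions but saves much more on the confident mistake, so the softened model has lower perplexity on this data.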

Perplexity in Reinforcement Learning from Human Feedback (RLHF)

Modern AI systems use RLHF for alignment.

Role of Perplexity:

- Initial training uses perplexity minimization

- RLHF adjusts behavior beyond perplexity

Key Insight:

Perplexity alone cannot capture:

- Helpfulness

- Safety

- Alignment

Domain-Specific Perplexity Optimization

Perplexity varies across domains.

Examples:

- Medical text → specialized vocabulary

- Legal documents → complex structure

- Financial reports → numerical context

Strategy:

Fine-tune models on domain-specific data to reduce perplexity.

Perplexity Drift in Production Systems

Models degrade over time.

Causes:

- Changing data patterns

- New vocabulary

- User behavior shifts

Solution:

- Continuous monitoring

- Periodic retraining

Token-Level vs Sequence-Level Perplexity

Token-Level:

- Measures prediction per word/token

Sequence-Level:

- Measures entire sentence probability

Insight:

Both provide different perspectives on model performance.
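The two views can disagree, as the following sketch shows. The probabilities are invented, and the helper names are chosen for this example.

```python
import math

def sequence_log_prob(token_probs):
    # Log-probability of the whole sequence: the sum of per-token log-probabilities.
    return sum(math.log(p) for p in token_probs)

def token_level_perplexity(token_probs):
    # Normalizes by length, so sequences of different lengths are comparable.
    return math.exp(-sequence_log_prob(token_probs) / len(token_probs))

short_sentence = [0.2, 0.2]   # two poorly predicted tokens
long_sentence = [0.5] * 6     # six well-predicted tokens

print(math.exp(sequence_log_prob(short_sentence)))  # about 0.04
print(math.exp(sequence_log_prob(long_sentence)))   # about 0.016
print(token_level_perplexity(short_sentence))       # about 5
print(token_level_perplexity(long_sentence))        # about 2
```

The longer sentence has the lower overall probability simply because it contains more tokens, yet it is predicted better per token. This is why perplexity is normally reported per token.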

Perplexity in Autoregressive vs Masked Models

Different architectures use perplexity differently.

Autoregressive Models:

- Predict next token

- Use perplexity directly

Masked Models:

- Predict missing tokens

- Do not define a left-to-right sequence probability, so they are evaluated with modified metrics such as pseudo-perplexity

Perplexity and Compression Theory

Perplexity is related to data compression.

Key Idea:

Better language models compress data more efficiently.

Interpretation:

- Lower perplexity = better compression

- Model captures patterns effectively

Sparse vs Dense Models and Perplexity

Modern AI uses different architectures.

Dense Models:

- All parameters active

- Typically lower perplexity than a sparse model with the same total parameter count

Sparse Models:

- Selectively activated

- Efficient but complex

Perplexity in Retrieval-Augmented Generation (RAG)

RAG combines retrieval and generation.

Impact on Perplexity:

- External knowledge reduces uncertainty

- Improves predictions

Result:

Lower perplexity with better factual accuracy.

Perplexity in Multimodal AI Systems

AI now processes text, images, and audio.

Challenge:

Perplexity is primarily text-based.

Adaptation:

- Multimodal evaluation metrics

- Hybrid approaches

Perplexity and Long-Context Models

Modern models process long documents.

Observation:

- Longer context reduces perplexity

- Better coherence

Challenge:

- Computational cost increases

Perplexity Optimization Using Curriculum Learning

Curriculum learning improves training.

Approach:

- Start with simple data

- Gradually increase complexity

Result:

- Faster convergence

- Lower perplexity

Gradient-Based Optimization for Perplexity Reduction

Training minimizes loss related to perplexity.

Techniques:

- Gradient descent

- Adam optimizer

- Learning rate scheduling

Perplexity in Edge AI and Lightweight Models

Edge devices require efficient models.

Trade-Off:

- Slightly higher perplexity

- Lower computational cost

Perplexity in Knowledge Distillation

Large models transfer knowledge to smaller ones.

Goal:

- Reduce model size

- Maintain low perplexity

Perplexity in Streaming Data Systems

Real-time systems process continuous data.

Challenge:

- Data evolves constantly

Solution:

- Online learning

- Adaptive models

Perplexity and Explainability

Perplexity does not explain decisions.

Limitation:

- Black-box metric

Solution:

Combine with:

- Attention visualization

- Feature importance

Adversarial Attacks and Perplexity

Models can be manipulated.

Observation:

Some adversarial inputs, such as machine-generated gibberish strings, have unusually high perplexity.

Use Case:

- Detect malicious inputs

Perplexity in Synthetic Data Generation

AI generates training data.

Insight:

- Synthetic data quality affects perplexity

- Poor data increases uncertainty

Perplexity Thresholds in Production Systems

Organizations define thresholds.

Example:

- Acceptable perplexity range

- Alerts when exceeded
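A threshold alert of this kind could look like the sketch below. The class name `PerplexityMonitor`, the threshold of 30, the window of 3, and the measurements are all hypothetical; a real deployment would feed the result into whatever logging or alerting system is already in place.

```python
from collections import deque

class PerplexityMonitor:
    """Flags when the rolling mean of recent perplexity readings exceeds a threshold."""

    def __init__(self, threshold, window=3):
        self.threshold = threshold
        self.recent = deque(maxlen=window)  # keeps only the last `window` readings

    def observe(self, perplexity):
        self.recent.append(perplexity)
        rolling_mean = sum(self.recent) / len(self.recent)
        return rolling_mean > self.threshold

monitor = PerplexityMonitor(threshold=30.0, window=3)
readings = [22.0, 24.0, 23.0, 35.0, 41.0, 44.0]  # hypothetical batch perplexities
alerts = [monitor.observe(p) for p in readings]

print(alerts)  # [False, False, False, False, True, True]
```

Averaging over a window means a single unusual batch (the reading of 35) does not trigger an alert, while a sustained rise does.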

Perplexity in A/B Testing of Models

Compare multiple models.

Process:

- Deploy models

- Measure perplexity

- Select best performer

Hardware Acceleration and Perplexity Optimization

Modern AI uses:

- GPUs

- TPUs

Benefit:

- Faster training

- A given perplexity target reached in less wall-clock time

Distributed Training and Perplexity

Large models train across multiple systems.

Advantage:

- Handle massive datasets

- Improve performance

Perplexity and Energy Efficiency

AI training consumes energy.

Insight:

- Lower perplexity often requires more compute

- Trade-off between efficiency and performance

Real-World Enterprise Case Study (Conceptual)

Scenario:

An e-commerce company improves search recommendations.

Approach:

- Train language model

- Reduce perplexity

Result:

- Better product suggestions

- Increased conversions

Combining Perplexity with Human Evaluation

Human feedback remains essential.

Why:

- Perplexity cannot capture meaning fully

Approach:

- Hybrid evaluation systems

Research Trends in Perplexity Optimization

Current Trends:

- Efficient transformers

- Retrieval-based models

- Hybrid evaluation metrics

Future Alternatives to Perplexity

New metrics are emerging.

Examples:

- Semantic similarity metrics

- Human evaluation

Advantages of Using Perplexity

Benefits:

- Easy to compute

- Widely used metric

- Helps model comparison

Limitations of Perplexity

Drawbacks:

- Does not measure semantic meaning

- Can be misleading

- Depends on dataset

How to Improve Perplexity

Strategies:

- Increase training data

- Optimize model parameters

- Use better architectures

- Clean dataset

Tools and Libraries for Measuring Perplexity

Popular tools:

- Python NLP libraries

- TensorFlow

- PyTorch

Perplexity in Modern AI Systems

Modern AI systems rely heavily on perplexity.

Use cases:

- Generative AI

- Conversational AI

- Large language models

Future of Perplexity

Future developments include:

- Better evaluation metrics

- Integration with AI tools

- Improved model benchmarking

Conclusion

Perplexity is a fundamental metric in natural language processing that helps evaluate how well a language model predicts text.

While it has limitations, it remains a widely used and valuable tool for improving AI systems.

Understanding perplexity enables professionals to build better models, improve predictions, and enhance overall AI performance.

FAQs

Is Perplexity a powerful AI?

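This question refers to Perplexity AI, the answer engine, rather than the perplexity metric covered in this article. Perplexity AI is powered by a combination of its own models and top AI models from OpenAI, Anthropic, Google, and others, using a routing system to choose a model for each query.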

What models power Perplexity?

Perplexity is powered by a multi-model system combining its own Sonar models with top AI models like GPT, Claude, Gemini, and Grok, selecting the best model dynamically for each query.

What is a high Perplexity?

High perplexity refers to a situation where a language model is uncertain and less confident in its predictions, indicating lower accuracy and poorer performance in understanding or generating text.

Which AI does Elon Musk use?

Elon Musk mainly uses Grok AI by xAI, his own AI model, which powers features on X and supports his broader AI ecosystem.

How much did Jeff Bezos invest in Perplexity AI?

Jeff Bezos has been reported as an investor in Perplexity AI's funding rounds, but the amount he personally invested has not been publicly disclosed.