Artificial Intelligence can feel overwhelming at first. With so many technical terms used in tutorials, research papers, and discussions, it’s easy to get lost. One term you’ll frequently encounter is “block.”

You might hear phrases like:

- Transformer blocks

- Attention blocks

- Residual blocks

- Pipeline blocks

But what does “block” actually mean in AI?

In simple terms, a block in AI is a reusable building unit—a small module that performs a specific function and can be repeated multiple times to build complex models. Understanding this concept is key to grasping how modern AI systems are designed and scaled.

In this guide, we’ll break down:

- What a block is

- Why it matters

- The most common types of blocks in AI

- How blocks are used in real-world systems

What Does “Block” Mean in AI?

In AI—especially deep learning—a block typically refers to:

- A self-contained module made of one or more layers

- A structure with input → processing → output

- A reusable unit that can be stacked multiple times

- A standard pattern in model architecture

Simple Analogy

Think of blocks like Lego pieces.

- One Lego piece → small and simple

- Many connected pieces → complex structure

Similarly:

- One block → basic function

- Many blocks stacked → powerful AI model

Important: “Block” is not a strict scientific term—it’s a practical engineering concept used to simplify design.

Why Are Blocks Important in Modern AI?

Modern AI models are massive—sometimes containing billions of parameters. Without modular design, they would be nearly impossible to build or maintain.

Blocks solve key challenges:

- Simplify architecture design

- Enable scalability (stacking blocks easily)

- Improve debugging and testing

- Allow reuse of proven patterns

Instead of designing 100 different layers, engineers:

- Create one effective block

- Repeat it multiple times

That’s why you’ll hear:

- “This model has 12 blocks”

- “The network uses 24 Transformer blocks”

Blocks vs Layers: What’s the Difference?

This is a common confusion for beginners.

Layers

- Single operation

- Examples: convolution, linear, normalization

Blocks

- Group of layers combined together

- Often includes connections between layers (like skip connections)

- Acts as a reusable module

Easy Way to Remember

- Layers = Ingredients

- Blocks = Recipes

- Model = Full dish
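
To make the layer / block / model distinction concrete, here is a minimal NumPy sketch. The names `linear`, `relu`, `make_block` and `make_model` are made up for this illustration and do not come from any library; real frameworks provide their own equivalents.

```python
import numpy as np

rng = np.random.default_rng(0)

def linear(in_dim, out_dim):
    """A layer: one operation (a matrix multiply plus a bias)."""
    w = rng.normal(0.0, 0.1, size=(in_dim, out_dim))
    b = np.zeros(out_dim)
    return lambda x: x @ w + b

def relu(x):
    """Another layer: an element-wise activation."""
    return np.maximum(x, 0.0)

def make_block(dim):
    """A block: a fixed recipe that combines several layers."""
    fc1, fc2 = linear(dim, dim), linear(dim, dim)
    return lambda x: fc2(relu(fc1(x)))

def make_model(dim, num_blocks):
    """A model: the same block recipe repeated num_blocks times."""
    blocks = [make_block(dim) for _ in range(num_blocks)]

    def forward(x):
        for block in blocks:
            x = block(x)
        return x

    return forward

model = make_model(dim=8, num_blocks=3)
out = model(np.ones((2, 8)))   # a batch of 2 inputs with 8 features each
print(out.shape)               # (2, 8)
```

Changing `num_blocks` is all it takes to make this toy model deeper, which is the practical appeal of thinking in blocks.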

AI Blocks and Their Functions

| Block Type | Main Purpose | Key Components | Common Use Cases |

| MLP (Feed-Forward) | Feature transformation | Linear layers, activation functions | NLP, tabular data, Transformers |

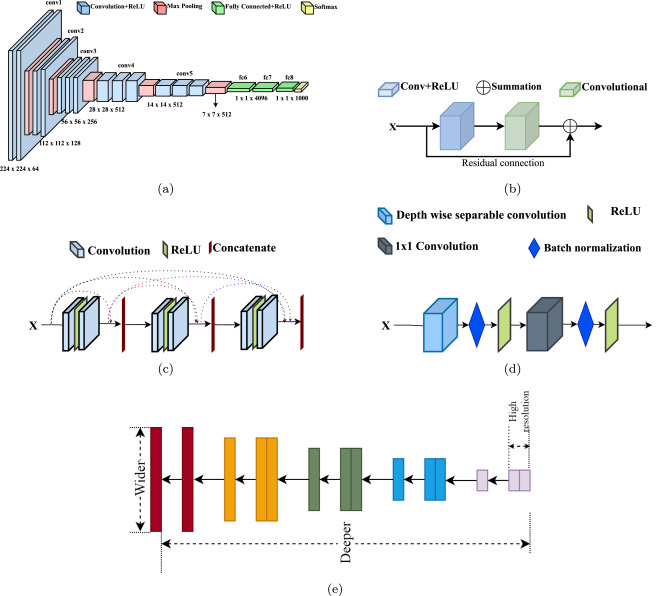

| Convolution Block | Extract visual features | Convolution, activation, pooling | Image classification, detection |

| Residual Block | Improve deep network training | Skip connections, convolution layers | Deep CNNs, ResNet models |

| Attention Block | Focus on important input parts | Self-attention mechanism | NLP, vision transformers |

| Transformer Block | Core unit of modern AI models | Attention + MLP + normalization | LLMs, chatbots, NLP systems |

| U-Net Block | Image reconstruction & generation | Downsampling, upsampling, skip links | Image segmentation, diffusion AI |

Common Types of Blocks in AI (Explained Simply)

1. Feed-Forward (MLP) Block

A basic neural network component made of:

- Linear layers

- Activation functions

Used in:

- Transformers

- General neural networks

Why it matters:

- Helps models learn complex patterns
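
As a rough sketch of what this looks like in code, here is an MLP block in NumPy. The class name `MLPBlock` and the sizes are assumptions chosen for the example; the 4x hidden expansion mirrors a common Transformer choice, not a rule.

```python
import numpy as np

class MLPBlock:
    """Linear -> ReLU -> Linear, returning to the input width."""

    def __init__(self, dim, hidden_dim, seed=0):
        rng = np.random.default_rng(seed)
        self.w1 = rng.normal(0.0, 0.02, size=(dim, hidden_dim))
        self.b1 = np.zeros(hidden_dim)
        self.w2 = rng.normal(0.0, 0.02, size=(hidden_dim, dim))
        self.b2 = np.zeros(dim)

    def __call__(self, x):
        h = np.maximum(x @ self.w1 + self.b1, 0.0)  # linear layer + activation
        return h @ self.w2 + self.b2                # project back to dim

    def num_params(self):
        return self.w1.size + self.b1.size + self.w2.size + self.b2.size

block = MLPBlock(dim=16, hidden_dim=64)
y = block(np.ones((4, 16)))
print(y.shape, block.num_params())   # (4, 16) 2128
```

Because the output width equals the input width, blocks like this can be stacked one after another without any glue code.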

2. Convolution Block (CNN Block)

Widely used in computer vision.

Includes:

- Convolution layers

- Activation functions

- Normalization

- Pooling (sometimes)

Used in:

- Image classification

- Object detection

- Medical imaging

Why it matters:

- Extracts visual features like edges and textures
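
Here is a small, hedged illustration of a convolution block picking out an edge. It uses a hand-written kernel instead of a learned one and leaves out normalization to stay short; `conv2d`, `max_pool2x2` and `conv_block` are names invented for this sketch.

```python
import numpy as np

def conv2d(image, kernel):
    """'Valid' 2D cross-correlation, which deep learning libraries call convolution."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

def max_pool2x2(x):
    """Keep the largest value in each non-overlapping 2x2 window."""
    h, w = x.shape[0] // 2 * 2, x.shape[1] // 2 * 2
    return x[:h, :w].reshape(h // 2, 2, w // 2, 2).max(axis=(1, 3))

def conv_block(image, kernel):
    """Convolution -> ReLU -> pooling."""
    return max_pool2x2(np.maximum(conv2d(image, kernel), 0.0))

image = np.zeros((6, 10))
image[:, 5:] = 1.0                           # dark left half, bright right half
kernel = np.array([[-1.0, 0.0, 1.0]] * 3)    # responds to dark-to-bright vertical edges

features = conv_block(image, kernel)
print(features)
# [[0. 3. 3. 0.]
#  [0. 3. 3. 0.]]
```

The non-zero columns mark where the brightness changes, so the block has turned raw pixels into an "edge here" feature map. In a trained CNN the kernel values are learned rather than written by hand.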

3. Residual Block (ResNet Block)

Introduces skip connections:

- Input is added back to output

Used in:

- Deep vision models

- ResNet architectures

Why it matters:

- Eases gradient flow, which mitigates the vanishing gradient problem

- Enables very deep networks
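
The effect of the skip connection can be seen in a toy experiment. This sketch assumes small random weights and a zero-initialized second layer (an illustrative choice, so that each residual block starts as the identity); `residual_block` and `plain_block` are hypothetical names.

```python
import numpy as np

def plain_block(x, w1):
    """No skip connection: the output is only the transformed input."""
    return np.maximum(x @ w1, 0.0)

def residual_block(x, w1, w2):
    """y = x + F(x), where F is a small two-layer transformation."""
    fx = np.maximum(x @ w1, 0.0) @ w2
    return x + fx   # skip connection: the input is added back to the output

rng = np.random.default_rng(0)
dim = 4
x = rng.normal(size=(1, dim))
w1 = rng.normal(0.0, 0.1, size=(dim, dim))
w2 = np.zeros((dim, dim))   # F(x) == 0, so each residual block starts as the identity

plain, residual = x, x
for _ in range(50):          # stack 50 blocks of each kind
    plain = plain_block(plain, w1)
    residual = residual_block(residual, w1, w2)

print(np.linalg.norm(plain) < 1e-6)   # True: the signal has faded away
print(np.allclose(residual, x))       # True: the signal survives all 50 blocks
```

The same path that carries the signal forward also carries gradients backward, which is why deep stacks of residual blocks tend to be easier to train than plain stacks.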

4. Attention Block

Helps models focus on important information.

In text:

- Relates each word to the other words in the sequence, even distant ones

In images:

- Links different regions

Used in:

- Transformers

- Vision Transformers (ViT)

- Multimodal AI

Why it matters:

- Lets every position draw directly on any other position, one of the biggest breakthroughs in modern AI
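
A minimal sketch of the core computation, scaled dot-product self-attention, is shown below. It is single-head, uses random weights and omits masking, so treat it as an illustration of the idea, not a production attention block.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)   # subtract the max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(x, wq, wk, wv):
    q, k, v = x @ wq, x @ wk, x @ wv
    scores = q @ k.T / np.sqrt(k.shape[-1])   # similarity of every position with every other
    weights = softmax(scores, axis=-1)        # each row sums to 1
    return weights @ v, weights               # weighted mix of the value vectors

rng = np.random.default_rng(0)
seq_len, dim = 5, 8
x = rng.normal(size=(seq_len, dim))           # 5 tokens, 8 features each
wq, wk, wv = (rng.normal(0.0, 0.1, size=(dim, dim)) for _ in range(3))

out, weights = self_attention(x, wq, wk, wv)
print(out.shape, weights.shape)   # (5, 8) (5, 5)
```

Row `i` of `weights` says how much token `i` "looks at" each of the other tokens, which is the sense in which attention focuses on important parts of the input.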

5. Transformer Block (Most Important Today)

The core building unit of modern AI models.

Typically includes:

- Normalization

- Self-attention

- Feed-forward network (MLP)

- Residual connections

Used in:

- Large Language Models (LLMs)

- Chatbots

- NLP systems

Why it matters:

- Backbone of today’s AI revolution

6. U-Net Blocks (For Image Generation)

Common in image processing and generation.

Structure:

- Downsampling blocks

- Middle processing block

- Upsampling blocks

- Skip connections

Used in:

- Image segmentation

- Diffusion models

Why it matters:

- Enables high-quality image generation
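
The role of the skip connection in a U-Net can be sketched on a 1D signal. This is a deliberately tiny stand-in (one down step, one up step, no learned weights); `downsample`, `upsample` and `tiny_unet` are names made up for the example.

```python
import numpy as np

def downsample(x):
    """Average pooling: halves the length and discards fine detail."""
    return x.reshape(-1, 2).mean(axis=1)

def upsample(x):
    """Nearest-neighbour upsampling: doubles the length."""
    return np.repeat(x, 2)

def tiny_unet(x):
    skip = x                      # saved for the skip connection
    middle = downsample(x)        # downsampling path (middle block omitted)
    up = upsample(middle)         # upsampling path: coarse structure only
    return np.stack([up, skip])   # skip connection: concatenate as two channels

x = np.array([1.0, 3.0, 2.0, 6.0, 5.0, 5.0, 0.0, 4.0])
out = tiny_unet(x)
print(out[0])   # [2. 2. 4. 4. 5. 5. 2. 2.]  coarse, detail lost
print(out[1])   # [1. 3. 2. 6. 5. 5. 0. 4.]  original detail carried across
```

In a real U-Net, learned layers after the concatenation combine the coarse context with the fine detail, which is what makes sharp segmentation masks and generated images possible.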

Blocks in AI Pipelines (Beyond Neural Networks)

“Block” isn’t limited to model architecture.

In real-world AI systems, it can also mean workflow steps, such as:

- Data collection block

- Data preprocessing block

- Training block

- Evaluation block

- Deployment block

- Monitoring block

Here, “block” means a modular stage in the AI lifecycle.
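
As an illustration of pipeline blocks, each stage below is a plain function that takes a state dictionary and returns it with something added. The data, the stage names and the keyword-based "model" are all toy assumptions for this sketch.

```python
def collect(state):
    state["raw"] = [" Great product ", "terrible", " GREAT value", "Terrible support "]
    state["labels"] = [1, 0, 1, 0]
    return state

def preprocess(state):
    state["clean"] = [text.strip().lower() for text in state["raw"]]
    return state

def train(state):
    # Toy "model": the words that appear only in positive examples.
    positive, negative = set(), set()
    for text, label in zip(state["clean"], state["labels"]):
        (positive if label == 1 else negative).update(text.split())
    state["model"] = positive - negative
    return state

def evaluate(state):
    predictions = [int(any(word in state["model"] for word in text.split()))
                   for text in state["clean"]]
    correct = sum(p == y for p, y in zip(predictions, state["labels"]))
    state["accuracy"] = correct / len(state["labels"])
    return state

def run_pipeline(stages, state=None):
    """Run each block in order, passing the state along."""
    state = {} if state is None else state
    for stage in stages:
        state = stage(state)
    return state

result = run_pipeline([collect, preprocess, train, evaluate])
print(result["accuracy"])   # 1.0
```

Because every stage has the same interface, one block can be swapped out (for example, a different `preprocess`) without touching the rest of the pipeline.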

How Blocks Fit into the Overall AI Architecture

To truly understand blocks, it helps to see where they sit in the big picture of an AI system.

Typical AI Model Flow:

- Input Layer → Receives raw data (text, image, audio)

- Embedding Layer → Converts data into numerical form

- Stacked Blocks → Core processing happens here

- Output Layer → Produces predictions or results

The stacked blocks are the “brain” of the model, where most of the computation happens and most of the learned parameters live.

Internal Flow of a Typical Block

Most AI blocks follow a structured internal pipeline:

Input → Transformation → Enhancement → Output

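Example (Transformer Block Flow, pre-norm variant):

- Input from the embedding layer or the previous block

- Layer normalization

- Self-attention mechanism

- Add residual connection

- Layer normalization

- Feed-forward network

- Add residual connection

- Output to next block

The original Transformer used a post-norm ordering instead, normalizing after each residual addition. In pre-norm models, a final normalization is usually applied once after the last block.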

Each block refines the information step-by-step.
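
A Transformer block can be sketched in a few lines of NumPy. This version is a simplified pre-norm variant (normalize, attend, add; normalize, feed-forward, add) with a single attention head, no biases and no learnable normalization parameters, all of which are simplifications made for the example. `init_block` and `transformer_block` are illustrative names.

```python
import numpy as np

def layer_norm(x, eps=1e-5):
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def softmax(x):
    e = np.exp(x - x.max(axis=-1, keepdims=True))
    return e / e.sum(axis=-1, keepdims=True)

def init_block(dim, rng):
    shapes = {"wq": (dim, dim), "wk": (dim, dim), "wv": (dim, dim),
              "wo": (dim, dim), "w1": (dim, 4 * dim), "w2": (4 * dim, dim)}
    return {name: rng.normal(0.0, 0.02, size=shape) for name, shape in shapes.items()}

def transformer_block(x, p):
    # 1) normalize, 2) self-attention, 3) add residual
    h = layer_norm(x)
    q, k, v = h @ p["wq"], h @ p["wk"], h @ p["wv"]
    attn = softmax(q @ k.T / np.sqrt(q.shape[-1])) @ v
    x = x + attn @ p["wo"]
    # 4) normalize, 5) feed-forward network, 6) add residual
    h = layer_norm(x)
    x = x + np.maximum(h @ p["w1"], 0.0) @ p["w2"]
    return x

rng = np.random.default_rng(0)
dim, seq_len, num_blocks = 16, 6, 4
blocks = [init_block(dim, rng) for _ in range(num_blocks)]

x = rng.normal(size=(seq_len, dim))   # stand-in for input embeddings
for params in blocks:                 # output of one block feeds the next
    x = transformer_block(x, params)
print(x.shape)   # (6, 16)
```

The input and output shapes match, so the loop at the bottom can stack as many blocks as you like.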

Depth vs Width: How Blocks Affect Model Design

When scaling AI models, two important concepts come into play:

1. Depth (Number of Blocks)

- More blocks stacked vertically

- Helps learn complex hierarchical patterns

2. Width (Size of Each Block)

- More neurons/features inside each block

- Improves representation power

Trade-Off Table:

| Factor | Depth (More Blocks) | Width (Bigger Blocks) |
| --- | --- | --- |
| Learning | Better hierarchical learning | Better feature richness |
| Cost | Slower training (more sequential steps) | More memory usage per block |
| Usage | Deep networks, Transformers | Wide neural networks |

Modern AI balances both depth and width for optimal performance.
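
A quick back-of-the-envelope calculation shows how depth and width affect size differently. The sketch below uses a common approximation for one Transformer block (four attention projections plus a feed-forward network with a 4x hidden size) and ignores biases, normalization and embeddings; the function names and the 12-block, 768-wide baseline are assumptions for illustration.

```python
def block_params(dim, mlp_ratio=4):
    attention = 4 * dim * dim            # query, key, value and output projections
    mlp = 2 * dim * (mlp_ratio * dim)    # expand, then project back
    return attention + mlp

def model_params(num_blocks, dim):
    return num_blocks * block_params(dim)

base = model_params(num_blocks=12, dim=768)
deeper = model_params(num_blocks=24, dim=768)    # double the depth
wider = model_params(num_blocks=12, dim=1536)    # double the width

print(base)            # 84934656
print(deeper / base)   # 2.0
print(wider / base)    # 4.0
```

Under these assumptions, doubling depth doubles the parameter count, while doubling width quadruples it, which is one reason the two knobs are tuned together.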

How Blocks Enable Transfer Learning

Blocks play a key role in transfer learning, which is widely used today.

How it works:

- Pretrained models already contain stacked blocks

- These blocks have learned general patterns

- You reuse them for new tasks

Example:

- Use existing Transformer blocks trained on large text data

- Fine-tune them for:

- sentiment analysis

- chatbot applications

- summarization

This saves time, cost, and data requirements.
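
Here is a hedged sketch of the simplest form of transfer learning: keep the pretrained blocks frozen and train only a small new output head. The "pretrained" weights are random stand-ins and the dataset is synthetic; in practice you would load a real checkpoint and often update some of the blocks too.

```python
import numpy as np

rng = np.random.default_rng(0)

# Stand-in for pretrained blocks (hypothetical weights, not a real checkpoint).
pretrained_blocks = [rng.normal(0.0, 0.5, size=(8, 8)) for _ in range(3)]
snapshot = [w.copy() for w in pretrained_blocks]

def backbone(x):
    """Run the input through the frozen blocks to get reusable features."""
    for w in pretrained_blocks:
        x = np.tanh(x @ w)
    return x

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def log_loss(p, y):
    p = np.clip(p, 1e-9, 1.0 - 1e-9)
    return float(-np.mean(y * np.log(p) + (1.0 - y) * np.log(1.0 - p)))

# New task with its own small labelled dataset (synthetic here).
x = rng.normal(size=(64, 8))
y = (x[:, 0] > 0).astype(float)

features = backbone(x)    # blocks are frozen, so features are computed once
head = np.zeros(8)        # the only parameters we train
initial_loss = log_loss(sigmoid(features @ head), y)

for _ in range(200):      # plain gradient descent on the head
    p = sigmoid(features @ head)
    head -= 0.5 * features.T @ (p - y) / len(y)

final_loss = log_loss(sigmoid(features @ head), y)
print(final_loss < initial_loss)   # True
```

Only 8 numbers were trained here, while the block weights were left untouched, which is where the savings in time and data come from.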

Blocks in Multimodal AI Systems

Modern AI systems often handle multiple data types together (text + image + audio).

How blocks are used:

- Separate blocks process different modalities

- Shared attention blocks combine information

Example Workflow:

- Text → Transformer blocks

- Image → CNN / Vision Transformer blocks

- Fusion → Attention blocks

This is broadly how systems like advanced AI assistants combine and understand complex inputs.
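
The fusion step can be sketched as cross-attention, where text features act as queries over image features. The feature arrays below are random stand-ins for the outputs of the text and image blocks, and the learned projections a real model would use are left out for brevity.

```python
import numpy as np

def softmax(x):
    e = np.exp(x - x.max(axis=-1, keepdims=True))
    return e / e.sum(axis=-1, keepdims=True)

def cross_attention(queries, context):
    """Each query row gathers a weighted mix of the context rows."""
    scores = queries @ context.T / np.sqrt(queries.shape[-1])
    return softmax(scores) @ context

rng = np.random.default_rng(0)
text_features = rng.normal(size=(4, 16))    # 4 text tokens (stand-in for Transformer block output)
image_features = rng.normal(size=(9, 16))   # 9 image patches (stand-in for CNN / ViT block output)

# Fusion: every text token is enriched with information from the image patches.
fused = text_features + cross_attention(text_features, image_features)
print(fused.shape)   # (4, 16)
```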

Optimization Techniques Inside Blocks

Blocks are often optimized using advanced techniques:

1. Parameter Sharing

- Same parameters reused across blocks

- Reduces model size

2. Sparse Computation

- Only part of the block is activated

- Improves efficiency

3. Quantization

- Reduces precision of numbers

- Saves memory and speeds up inference

4. Pruning

- Removes low-importance weights or neurons

- Makes models lighter

These techniques are critical for deploying AI in real-world applications.
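
Two of these techniques, quantization and pruning, are easy to try on a small weight matrix. The sketch below uses simple symmetric int8 quantization with one scale per matrix and 50% magnitude pruning; both are basic textbook variants chosen for illustration, and real toolchains use more careful schemes.

```python
import numpy as np

rng = np.random.default_rng(0)
weights = rng.normal(0.0, 1.0, size=(4, 4)).astype(np.float32)

# Quantization: store the weights as int8 plus one floating-point scale.
scale = np.abs(weights).max() / 127.0
quantized = np.clip(np.round(weights / scale), -127, 127).astype(np.int8)
dequantized = quantized.astype(np.float32) * scale
max_error = np.abs(weights - dequantized).max()   # at most about scale / 2

print(weights.nbytes, quantized.nbytes)   # 64 16

# Magnitude pruning: zero out the half of the weights closest to zero.
threshold = np.quantile(np.abs(weights), 0.5)
pruned = np.where(np.abs(weights) >= threshold, weights, 0.0)

print(np.count_nonzero(pruned))           # 8
```

The quantized copy takes a quarter of the memory at the cost of a small rounding error, and the pruned copy keeps only the largest half of the weights.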

How Blocks Are Visualized in AI Diagrams

When reading AI papers or diagrams, blocks are usually shown as:

- Rectangular boxes

- Repeated vertical stacks

- Arrows connecting outputs to inputs

Common diagram pattern:

Input → Block → Block → Block → Output

Once you recognize this pattern, reading research papers becomes much easier.

How Blocks Impact Model Performance

Blocks directly influence:

1. Accuracy

- More refined transformations improve predictions

2. Generalization

- Blocks that include regularization (such as dropout and normalization) can reduce overfitting

3. Efficiency

- Optimized blocks reduce computation

4. Scalability

- Easy to expand model size

The design of blocks often matters as much as raw model size.

Real-World Industry Perspective (2026)

In 2026, companies are focusing on:

Modular AI Systems

- Plug-and-play blocks

- Faster development cycles

AI-as-a-Service Architectures

- Pre-built blocks available via APIs

Custom Domain Blocks

- Healthcare-specific blocks

- Finance-specific blocks

- Retail recommendation blocks

Blocks are becoming standardized components across industries.

How Blocks Enable Scalability in Large AI Models

One of the biggest advantages of using blocks in AI is scalability.

Modern models—especially large language models—are built by simply stacking more blocks. Instead of redesigning the architecture, researchers scale models by:

- Increasing the number of blocks (depth)

- Increasing the size of each block (width)

- Improving block efficiency (optimization techniques)

For example:

- A small model might have 6–12 blocks

- A large model can have 50+ blocks, with the largest exceeding 100

This modular scaling approach allows:

- Faster experimentation

- Easier upgrades

- Fairly predictable performance improvements, up to the point of diminishing returns

How Blocks Improve Model Training Stability

Blocks are not just about structure—they also improve training stability.

Techniques used inside blocks include:

1. Normalization Layers

- Keep the scale of activations stable during training

- Examples: BatchNorm, LayerNorm

2. Skip (Residual) Connections

- Help gradients flow backward efficiently

- Mitigate vanishing gradient problems

3. Dropout Layers

- Reduce overfitting

- Improve generalization

These techniques are often bundled into blocks, making them robust and reliable building units.
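
Two of these stabilizers, layer normalization and dropout, are small enough to sketch directly. The versions below are simplified (no learnable scale or shift in the normalization) and the function names are chosen for this example only.

```python
import numpy as np

def layer_norm(x, eps=1e-5):
    """Rescale each row to roughly zero mean and unit variance."""
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def dropout(x, rate, rng, training=True):
    """Randomly zero activations during training; do nothing at inference."""
    if not training or rate == 0.0:
        return x
    mask = rng.random(x.shape) >= rate
    return x * mask / (1.0 - rate)   # "inverted" dropout keeps the expected value unchanged

rng = np.random.default_rng(0)
x = rng.normal(loc=50.0, scale=10.0, size=(3, 64))   # badly scaled activations

normed = layer_norm(x)
print(np.allclose(normed.mean(axis=-1), 0.0, atol=1e-6))   # True
print(np.allclose(normed.std(axis=-1), 1.0, atol=1e-3))    # True

dropped = dropout(x, rate=0.5, rng=rng)
print((dropped == 0).any())                                # True: some activations were dropped
print(dropout(x, rate=0.5, rng=rng, training=False) is x)  # True: inference leaves x untouched
```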

Block Design Patterns in AI Architecture

Over time, researchers have developed standard block design patterns that are widely reused.

Common Design Patterns:

- Conv → Normalization → Activation (CNN blocks)

- Attention → Add & Norm → MLP → Add & Norm (Transformer blocks, post-norm variant)

- Input → Layers → Add input back (skip connection) (Residual blocks)

These patterns are proven to work well, which is why they are reused across models.

How Engineers Customize Blocks

Blocks are not fixed—they can be customized depending on the problem.

Engineers modify blocks by:

- Changing activation functions (ReLU, GELU, etc.)

- Adjusting layer sizes (hidden units)

- Adding/removing normalization

- Tuning attention mechanisms

- Introducing new connections

This flexibility allows innovation while still keeping the modular structure intact.

Real-World Examples of Blocks in AI Systems

Understanding blocks becomes easier when you see them in action.

1. Chatbots and Language Models

- Built using multiple Transformer blocks

- Each block processes and refines text understanding

2. Image Recognition Systems

- Use convolution + residual blocks

- Detect patterns like edges, shapes, and objects

3. Recommendation Systems

- Use MLP blocks

- Learn user preferences and behavior patterns

4. Image Generation Models

- Use U-Net blocks

- Generate high-quality images step-by-step

Blocks and Model Efficiency (2026 Trend)

In 2026, AI research is focusing heavily on efficient block design.

Instead of just adding more blocks, researchers aim to:

- Make blocks lighter and faster

- Reduce computational cost

- Optimize memory usage

Emerging trends:

- Sparse attention blocks

- Lightweight transformer blocks

- Quantized and compressed blocks

The goal: better performance with fewer resources.

Challenges with Block-Based Architectures

While blocks are powerful, they come with challenges:

1. Over-Stacking

Too many blocks can:

- Increase computation cost

- Slow down training

2. Diminishing Returns

Adding more blocks doesn’t always improve performance significantly.

3. Complexity in Optimization

More blocks = more parameters = harder tuning

Best Practices for Using Blocks in AI

If you’re building or learning AI systems, follow these best practices:

- Start with standard block architectures

- Avoid unnecessary complexity

- Monitor performance when increasing blocks

- Use pre-trained architectures when possible

- Focus on data quality along with model design

Future of Blocks in AI

Blocks will continue to evolve as AI grows.

What to expect:

- More automated block design (AutoML)

- Domain-specific blocks (healthcare, finance, etc.)

- Better integration across multimodal AI systems

- Highly optimized blocks for edge devices

The concept of blocks will remain central, but their design will become smarter and more efficient.

Why Understanding Blocks Helps You Learn AI Faster

Once you understand blocks, everything becomes clearer.

You can:

✔ Read AI architecture diagrams confidently

✔ Understand model descriptions easily

✔ Identify repeating patterns

✔ Connect concepts across domains

Instead of seeing AI as “complex math,” you start seeing it as structured systems built from reusable components.

Conclusion

So, what is a block in AI?

A block is a reusable building unit—a module made of one or more layers designed to perform a specific function and repeated multiple times to create powerful AI systems.

Whether it’s:

- Residual blocks in vision

- Transformer blocks in language models

- Pipeline blocks in workflows

The idea remains the same: modularity and scalability.

Final Takeaway

- Layers = individual operations

- Blocks = reusable patterns

- Models = stacks of blocks

This simple concept is the foundation of modern AI architecture.

FAQs

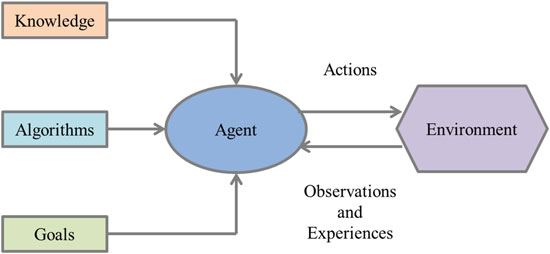

What is an AI block?

An AI block is a modular component or building unit within an AI system, such as a neural network layer or functional module, that performs a specific task in processing data or making decisions.

What are AI building blocks?

AI building blocks are the core components of AI systems, such as data, algorithms, models, neural networks, and computing infrastructure, that work together to enable machines to learn, process information, and make decisions.

What are the building blocks of an AI agent?

The building blocks of an AI agent include perception (data input), decision-making (models/algorithms), memory (knowledge storage), learning (adaptation), and action (output or execution), enabling it to interact with and respond to its environment.

What are the three primary building blocks of AI?

The three primary building blocks of AI are data, algorithms, and computing power, which together enable systems to learn, process information, and make intelligent decisions.

How are blocks used in AI?

AI blocks are used by combining modular components (like neural network layers or functional units) to process data step-by-step, where each block performs a specific task such as feature extraction, transformation, or decision-making within an AI system.