What Is RAG (Retrieval-Augmented Generation)?

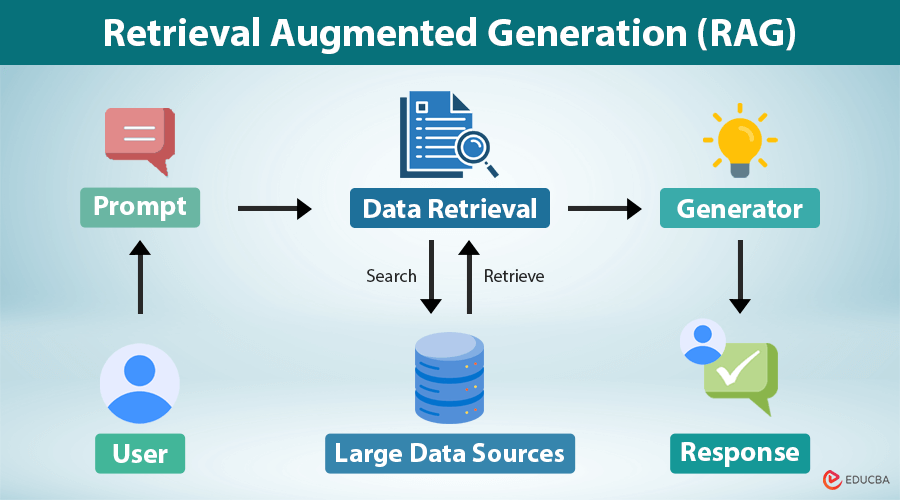

Retrieval-Augmented Generation (RAG) is an AI architecture that combines two key components:

- Retrieval: Finding relevant data from external knowledge sources

- Generation: Using an LLM to generate answers based on that data

Instead of relying only on pre-trained knowledge, RAG systems dynamically fetch relevant information at query time.

Example Workflow:

- User asks: “What is our company’s refund policy?”

- System retrieves: Policy document from internal database

- LLM generates: A clear, accurate answer using that document

Key Benefits:

- More accurate responses

- Reduced hallucinations

- Real-time or updated knowledge

- Ability to use private/internal data

Why RAG Matters

A) Overcomes Knowledge Limitations

LLMs don’t automatically know:

- Your company’s internal documents

- Latest product updates

- New research or regulations

RAG solves this by connecting models to external, up-to-date knowledge sources.

B) Reduces Hallucinations

LLMs may guess answers when unsure. This is risky in:

- Finance

- Healthcare

- Legal systems

RAG improves reliability by grounding answers in retrieved evidence.

C) Better Alternative to Fine-Tuning (for Knowledge Updates)

If your goal is to provide accurate information from documents, RAG is often better than fine-tuning.

- No retraining required

- Faster updates

- Lower cost

How RAG Works (Step-by-Step)

Step 1: Data Ingestion

Collect documents such as:

- PDFs, DOCX, PPT

- Knowledge bases

- Websites

- Databases

Step 2: Chunking

Split documents into smaller pieces:

- Typically 200–800 tokens

- May include overlapping content

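Good chunking improves retrieval accuracy: each chunk should be small enough to match a query precisely, yet large enough to keep the context it needs.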
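As a rough illustration of chunking with overlap, here is a minimal sketch. It counts whitespace-separated words as a stand-in for tokens, which is an assumption; a real pipeline would count tokens with the tokenizer of its embedding model. The function name `chunk_words` and its defaults are hypothetical.

```python
def chunk_words(text: str, chunk_size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into chunks of up to `chunk_size` words.

    Consecutive chunks share `overlap` words so that a sentence cut at a
    boundary still appears whole in at least one chunk.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must satisfy 0 <= overlap < chunk_size")

    words = text.split()  # assumption: words approximate tokens
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this window already reached the end of the text
    return chunks
```

With ten words, `chunk_size=4`, and `overlap=1`, this yields three chunks, each starting with the last word of the previous one.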

Step 3: Embeddings

Convert text into vectors (numerical representations of meaning).

This enables semantic search, not just keyword matching.
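To make the idea of comparing vectors concrete, the sketch below pairs a toy embedding with cosine similarity. `toy_embed` is a hypothetical stand-in that only hashes words into buckets, so it does not capture meaning the way a trained embedding model does. The part that carries over to real systems is the similarity calculation.

```python
import math
import re
import zlib


def toy_embed(text: str, dim: int = 64) -> list[float]:
    """Hypothetical stand-in for an embedding model: hashed bag-of-words.

    Each word increments one of `dim` buckets; the result is L2-normalised.
    """
    vec = [0.0] * dim
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        # crc32 is deterministic across runs, unlike the built-in hash()
        vec[zlib.crc32(token.encode("utf-8")) % dim] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


query_vec = toy_embed("refund policy")
doc_vec = toy_embed("Our refund policy covers returns within 30 days")
print(cosine_similarity(query_vec, doc_vec))
```

Swapping `toy_embed` for a real embedding model is what turns this from word overlap into semantic search.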

Step 4: Vector Database

Store embeddings in a vector database, a vector index library, or a search engine with vector support, such as:

- Pinecone

- FAISS

- Weaviate

- Elasticsearch
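The core job of any of these systems can be sketched in a few lines: store vectors alongside their text, then return the closest matches for a query vector. The class below is a hypothetical brute-force illustration, not the API of any product listed above. It assumes all vectors are already L2-normalised, so the dot product equals cosine similarity.

```python
from dataclasses import dataclass, field


@dataclass
class InMemoryVectorStore:
    """Hypothetical store that compares the query against every stored vector."""

    vectors: list[list[float]] = field(default_factory=list)
    texts: list[str] = field(default_factory=list)

    def add(self, vector: list[float], text: str) -> None:
        self.vectors.append(vector)
        self.texts.append(text)

    def search(self, query: list[float], k: int = 3) -> list[tuple[float, str]]:
        """Return up to k (score, text) pairs, highest score first."""
        scored = [
            (sum(q * x for q, x in zip(query, vec)), text)  # dot product
            for vec, text in zip(self.vectors, self.texts)
        ]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return scored[:k]


store = InMemoryVectorStore()
store.add([1.0, 0.0], "Refunds are issued within 30 days.")
store.add([0.0, 1.0], "Shipping takes 3 to 5 business days.")
store.add([0.6, 0.8], "Returns require the original receipt.")
print(store.search([1.0, 0.0], k=2))
```

Scanning every vector is fine for a few thousand chunks. Dedicated systems add indexing, persistence, and filtering so that search stays fast at larger scale.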

Step 5: User Query

The user asks a question, which is converted into an embedding with the same embedding model used for the documents.

Step 6: Retrieval

System finds the most relevant chunks using similarity search.

Enhancements include:

- Filtering

- Hybrid search (keyword + vector)

- Re-ranking

Step 7: Prompt Augmentation

Retrieved data is added to the LLM prompt.

Example instruction:

- “Answer using only the provided context.”

Step 8: Generation

The LLM generates a final, context-aware answer.

Core Components of a RAG System

A production-grade RAG system includes:

- Document sources

- Chunking strategy

- Embedding model

- Vector database

- Retriever

- Re-ranker (optional)

- Prompt template

- LLM (generator)

- Monitoring & evaluation

The Role of Context in RAG Systems

One of the most critical aspects of a RAG system is how effectively it uses context. Unlike traditional models that rely only on internal knowledge, RAG systems depend heavily on the quality and relevance of the retrieved context. If the retrieved information is accurate and well-structured, the generated response becomes significantly more reliable. However, if irrelevant or noisy data is retrieved, even the most advanced LLM can produce incorrect or misleading answers. This makes context selection and ranking a key factor in the success of any RAG implementation.

Prompt Engineering in RAG

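A well-structured prompt tells the model how to use the retrieved context and what to do when the answer is missing, which noticeably improves output quality.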

Example Prompt Template:

You are an AI assistant.

Use ONLY the context below to answer the question.

If the answer is not found, say "I don’t know."

Context:

{retrieved_chunks}

Question:

{user_query}

Advanced Prompt Tips:

- Ask for citations

- Control tone (formal, simple, technical)

- Add constraints (length, format)
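The template and the citation tip above can be combined in code. In this sketch, `build_prompt` is a hypothetical helper that numbers each retrieved chunk so the model has something to cite. The numbering scheme is an assumption, not part of the template itself.

```python
PROMPT_TEMPLATE = """You are an AI assistant.
Use ONLY the context below to answer the question.
If the answer is not found, say "I don’t know."

Context:
{retrieved_chunks}

Question:
{user_query}"""


def build_prompt(chunks: list[str], user_query: str) -> str:
    """Fill the template, labelling chunks [1], [2], ... for citation."""
    numbered = "\n\n".join(
        f"[{i}] {chunk}" for i, chunk in enumerate(chunks, start=1)
    )
    return PROMPT_TEMPLATE.format(retrieved_chunks=numbered, user_query=user_query)


print(build_prompt(
    ["Refunds are issued within 30 days.", "Refunds require a receipt."],
    "What is our company’s refund policy?",
))
```

To request citations, one option is to add a line such as "Cite the chunk numbers you used" to the template.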

Security and Privacy in RAG Systems

RAG introduces data access risks, especially in enterprises.

Key Considerations:

- Role-based access control (RBAC)

- Encryption of stored embeddings

- Secure API access

- Data masking for sensitive fields

- Audit logs for queries

Never expose confidential data through retrieval: apply access checks and masking before retrieved chunks reach the prompt.
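Data masking can be sketched as a small filter applied to chunks before they are indexed or sent to the LLM. The two patterns below (email addresses and long, card-like digit runs) are illustrative assumptions only. Real deployments need patterns and tooling matched to their own sensitive fields.

```python
import re

# Illustrative patterns; they will not catch every format of sensitive data.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# 13 to 19 digits, optionally separated by single spaces or hyphens
LONG_DIGITS_RE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def mask_sensitive(text: str) -> str:
    """Replace emails and card-like numbers with placeholder tokens."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return LONG_DIGITS_RE.sub("[NUMBER]", text)


print(mask_sensitive("Contact jane.doe@example.com about card 4111 1111 1111 1111."))
# prints: Contact [EMAIL] about card [NUMBER].
```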

RAG as a Foundation for Enterprise AI

In modern enterprises, RAG is becoming the backbone of AI-powered applications. Organizations are using RAG to connect LLMs with internal knowledge bases, enabling secure and context-aware decision-making. This approach allows businesses to scale AI without exposing sensitive data or retraining models repeatedly. As a result, RAG is increasingly viewed not just as a feature, but as a foundational layer in enterprise AI architecture.

Cost Optimization in RAG Systems

RAG systems can become expensive if not optimized.

Cost Drivers:

- Embedding generation

- Vector database storage

- LLM token usage

Optimization Techniques:

- Use smaller embedding models when possible

- Limit retrieved chunks (top-k optimization)

- Cache frequent queries

- Compress context before sending to LLM

RAG vs Knowledge Graphs

Many advanced systems combine both.

| Aspect | RAG | Knowledge Graph |
| --- | --- | --- |
| Data Type | Unstructured | Structured |
| Strength | Retrieval + generation | Relationships |
| Flexibility | High | Moderate |
| Reasoning | Limited | Strong logical reasoning |

RAG vs Fine-Tuning vs Training

RAG

Best for:

- Knowledge-based Q&A

- Real-time updates

- Document-driven answers

Pros:

- No retraining needed

- Supports citations

- Cost-effective

Cons:

- Depends on retrieval quality

Fine-Tuning

Best for:

- Style control

- Formatting

- Domain-specific behavior

Cons:

- Expensive to update knowledge

- Still prone to hallucination

Training from Scratch

Best for:

- Large-scale AI development

- Custom foundation models

Not practical for most teams because of the compute, data, and expertise required.

Real-World Use Cases of RAG

Customer Support

- Retrieves FAQs and ticket history

- Provides consistent responses

Internal Knowledge Assistant

- Answers employee queries

- Uses company SOPs and policies

Legal & Compliance

- Retrieves clauses from contracts

- Ensures traceable answers

Healthcare & Research

- Summarizes papers

- Assists in knowledge discovery

Sales Enablement

- Provides product details

- Helps during live customer calls

Developer Support

- Retrieves API documentation

- Explains error codes

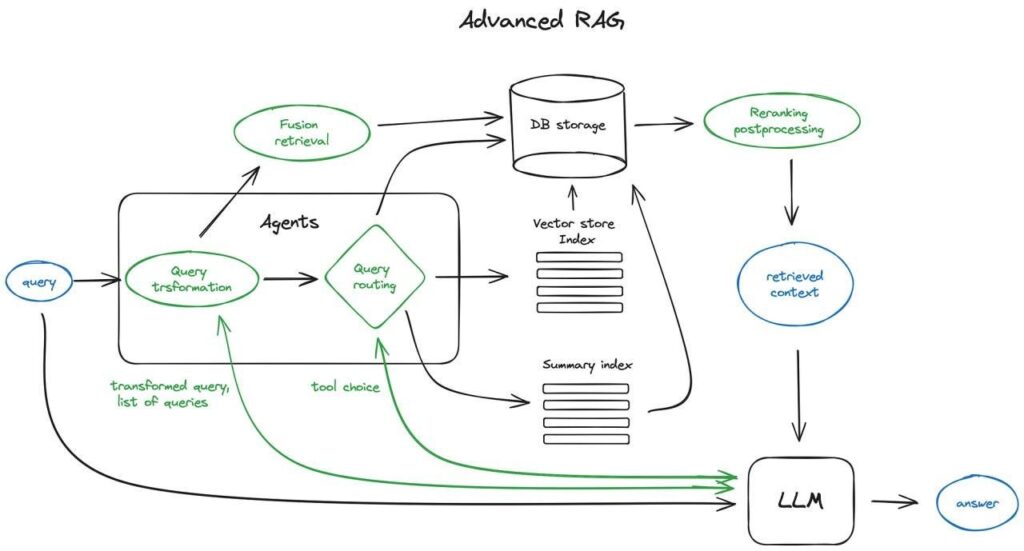

Advanced RAG Techniques

Hybrid Search

Combines keyword + semantic search for better accuracy
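One common way to merge a keyword ranking with a vector ranking is reciprocal rank fusion (RRF). It is only one option among several (weighted score blending is another), and the sketch below assumes each search has already returned an ordered list of document IDs. The constant `k=60` is a commonly used default, not a requirement.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked lists of document IDs into one ranking.

    Each document scores 1 / (k + rank) per list it appears in, so items
    ranked highly by both searches rise to the top.
    """
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)


keyword_hits = ["doc_a", "doc_b", "doc_c"]  # e.g. from a keyword search
vector_hits = ["doc_b", "doc_c", "doc_a"]   # e.g. from a similarity search
print(reciprocal_rank_fusion([keyword_hits, vector_hits]))
# prints: ['doc_b', 'doc_a', 'doc_c']
```

Because RRF uses only ranks, it sidesteps the problem that keyword scores and vector similarities live on different scales.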

Re-Ranking

Improves relevance of retrieved results

Metadata Filtering

Filters based on:

- Document type

- Department

- Date

- Permissions
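A metadata filter over the four fields above might look like the following sketch. The `Chunk` fields and the `filter_chunks` helper are hypothetical names chosen for illustration. In practice, most vector databases let you push such filters into the query itself rather than filtering in application code.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Chunk:
    text: str
    doc_type: str
    department: str
    updated: date
    allowed_roles: frozenset  # roles permitted to see this chunk


def filter_chunks(
    chunks: list[Chunk],
    user_roles: set[str],
    doc_type: Optional[str] = None,
    department: Optional[str] = None,
    updated_after: Optional[date] = None,
) -> list[Chunk]:
    """Keep chunks the user may see that also match the optional filters."""
    kept = []
    for chunk in chunks:
        if not (chunk.allowed_roles & user_roles):
            continue  # permission check: no shared role, no access
        if doc_type is not None and chunk.doc_type != doc_type:
            continue
        if department is not None and chunk.department != department:
            continue
        if updated_after is not None and chunk.updated <= updated_after:
            continue
        kept.append(chunk)
    return kept
```

Running the permission check before similarity search, rather than after generation, keeps restricted text out of the prompt entirely.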

Query Rewriting

Transforms vague queries into clearer ones

Context Optimization

- Removes irrelevant chunks

- Compresses large context
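Both steps can be approximated with a score threshold and a size budget. The sketch below is a simplified illustration: `select_context` is a hypothetical helper, the default threshold and budget are arbitrary, and word counts stand in for token counts. Summarising chunks with an LLM is another form of compression that is not shown here.

```python
def select_context(
    scored_chunks: list[tuple[float, str]],
    min_score: float = 0.3,
    max_words: int = 300,
) -> list[str]:
    """Keep the best-scoring chunks above `min_score` within a word budget."""
    selected = []
    used = 0
    for score, text in sorted(scored_chunks, key=lambda pair: pair[0], reverse=True):
        if score < min_score:
            break  # everything after this scores even lower
        n_words = len(text.split())
        if used + n_words > max_words:
            continue  # skip a chunk that would overflow the budget
        selected.append(text)
        used += n_words
    return selected


hits = [(0.9, "a b c"), (0.2, "noise"), (0.6, "d e f g"), (0.5, "h i")]
print(select_context(hits, min_score=0.3, max_words=5))
# prints: ['a b c', 'h i']
```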

Common RAG Mistakes

Poor Chunking

- Too small → loses context

- Too large → poor precision

Low-Quality Data

- Outdated or inconsistent documents

No Guardrails

- Model may guess answers

Too Much Context

- Dilutes the relevant passages with noise, which can reduce answer accuracy

No Evaluation

- Leads to unreliable systems

The Future Role of RAG in AI Ecosystems

Looking ahead, RAG is expected to play a central role in the evolution of AI systems. As models become more powerful, the focus will shift toward integrating them with reliable data sources. RAG will act as the bridge between static model knowledge and dynamic real-world information. With advancements in multimodal retrieval and agent-based systems, RAG will continue to evolve as a core component of next-generation AI architectures.

How to Evaluate RAG Systems

Retrieval Metrics

- Recall@k

- Precision@k
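Both retrieval metrics are easy to compute once you have a labelled set of relevant documents per query. The sketch below assumes document IDs are strings and that relevance labels come from a hand-built evaluation set. Precision@k here divides by k even when fewer than k results were returned, which is one of two common conventions.

```python
def precision_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the top-k retrieved IDs that are relevant."""
    if k <= 0:
        raise ValueError("k must be positive")
    hits = sum(1 for doc_id in retrieved[:k] if doc_id in relevant)
    return hits / k


def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of all relevant IDs that appear in the top-k results."""
    if k <= 0:
        raise ValueError("k must be positive")
    if not relevant:
        return 0.0  # nothing to find for this query
    hits = sum(1 for doc_id in retrieved[:k] if doc_id in relevant)
    return hits / len(relevant)


retrieved = ["d1", "d4", "d2", "d9"]
relevant = {"d1", "d2", "d3"}
print(precision_at_k(retrieved, relevant, k=3))  # 2 of the top 3 are relevant
print(recall_at_k(retrieved, relevant, k=3))     # 2 of the 3 relevant docs were found
```

Averaging these values over a set of test queries gives a baseline to compare chunking, embedding, and re-ranking changes against.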

Generation Metrics

- Accuracy

- Faithfulness

- Helpfulness

- Citation quality

Future of RAG (2026 Trends)

- Agentic RAG → multi-step reasoning

- Multimodal RAG → text + image + audio

- Graph RAG → knowledge graph integration

- Tool-augmented RAG → API + DB integration

- Secure RAG → permission-aware systems

Conclusion

Retrieval-Augmented Generation (RAG) is one of the most practical and powerful techniques in modern AI. It enhances LLM capabilities by combining external knowledge retrieval with intelligent response generation.

Instead of relying only on pre-trained knowledge, RAG helps deliver:

- Accurate answers

- Up-to-date information

- Reduced hallucinations

- Better trust and reliability

If you’re building AI systems that need to be accurate, explainable, and scalable, RAG should be your starting point.

FAQs

What is the purpose of RAG?

The purpose of RAG (Retrieval-Augmented Generation) is to enhance AI responses by retrieving relevant external data and combining it with language generation, improving accuracy, context, and up-to-date information.

What are the 7 types of RAG?

There is no single standard list of RAG types, and counts vary by source. Commonly discussed variants include Naive RAG, Hybrid RAG, Multimodal RAG, Conversational RAG, Graph RAG, and Agentic RAG, each differing in how it retrieves and generates information.

What does “augmented” mean in RAG?

In RAG (Retrieval-Augmented Generation), “augmented” means enhancing the AI’s response by adding relevant external data or retrieved information, making the output more accurate, contextual, and up-to-date.

What is RAG vs LLM?

An LLM generates responses from its pre-trained knowledge alone, while RAG (Retrieval-Augmented Generation) enhances an LLM by retrieving external, up-to-date information at query time to produce more accurate answers.

What is the difference between RAG and generative AI?

The difference between RAG and generative AI is that generative AI creates content using pre-trained knowledge, while RAG (Retrieval-Augmented Generation) enhances generative AI by retrieving external data to improve accuracy and provide up-to-date, context-aware responses.